This article covers how to enhance the security of your TIBCO BWCE images by creating a ReadOnlyFileSystem image for TIBCO BWCE. In previous articles, we have discussed the security advantages that this kind of image provides, focusing on aspects such as reducing the attack surface by limiting what any user can do, even if they gain access to a running container.

This article is part of my comprehensive TIBCO Integration Platform Guide where you can find more patterns and best practices for TIBCO integration platforms.

The same applies to any malware that your image may contain: without write access to most of the container, the actions it can perform are limited.

How does ReadOnlyFileSystem affect a TIBCO BWCE image?

This has a clear impact, because a TIBCO BWCE image needs to write to several folders as part of the expected behavior of the application. That is mandatory and does not depend on the scripts you used to build your image.

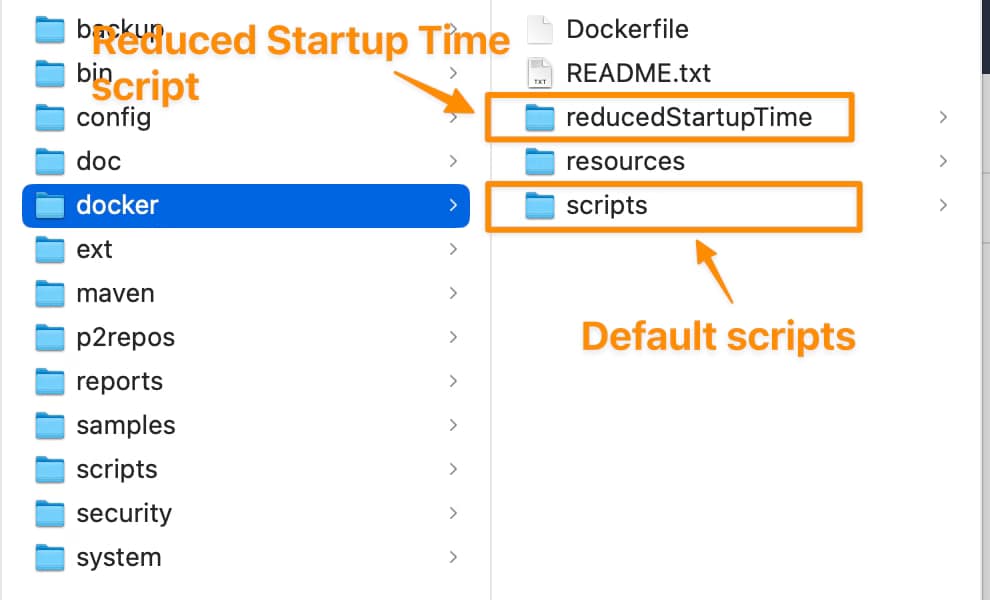

As you probably know, TIBCO BWCE ships two sets of scripts to build the Docker base image: the main ones and the ones included in the reducedStartupTime folder. You can find them on the GitHub page and also inside the docker folder of your TIBCO-HOME after the installation, as you can see in the picture.

The main difference between them is where the bwce-runtime is unzipped. With the default scripts, the unzip happens during the startup of the container, while with reducedStartupTime it happens while the image is being built. So you may already suspect that the default scripts need write access, since they have to unzip the file inside the container, and that is true.

But the reducedStartupTime scripts also require write access to run the application: several activities take place at startup, such as unzipping the EAR file, managing the properties file, and additional internal activities. So, no matter which set of scripts you use, you must provide a writable folder for these activities.

By default, all these activities are limited to a single folder. If you keep the defaults, this is the /tmp folder, so you must provide a volume for that folder.

How to deploy a TIBCO BWCE application with the

Now that it is clear that you need a volume for the /tmp folder, you need to decide which kind of volume to use. As you know, there are several kinds of volumes you can choose from, depending on your requirements.

In this case, the only requirement is write access; there is no need for storage capacity or persistence, so we can use an emptyDir volume. The content of an emptyDir is erased when the pod is removed, which is similar to the default behavior of the container file system, but it allows writing to its content.

To show what the YAML would look like, we will start from the default one that is available in the documentation:
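
As a minimal sketch of what such a volume definition can look like on its own, the fragment below declares an emptyDir volume named tmp. The `sizeLimit` value and the commented-out `medium: Memory` line are illustrative assumptions that do not come from the TIBCO documentation; they are standard optional emptyDir fields in Kubernetes that you may or may not need.

```yaml
# Fragment of a Pod spec (it goes under "spec:"), not a complete manifest.
volumes:
- name: tmp
  emptyDir:
    # Assumption: an illustrative cap on how much the container may write
    # to /tmp. Size it to fit the unzipped runtime and EAR contents.
    sizeLimit: 500Mi
    # Optional alternative: back the volume with RAM (tmpfs) instead of
    # node disk. Data written there counts against the container memory.
    # medium: Memory
```

If you have no specific constraint, the plain `emptyDir: {}` form is enough, since the only requirement here is a writable /tmp.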

    apiVersion: v1
    kind: Pod
    metadata:
      name: bookstore-demo
      labels:
        app: bookstore-demo
    spec:
      containers:
      - name: bookstore-demo
        image: bookstore-demo:2.4.4
        imagePullPolicy: Never
        envFrom:
        - configMapRef:
            name: name

So, we will change that to include the volume, as you can see here:

    apiVersion: v1
    kind: Pod
    metadata:
      name: bookstore-demo
      labels:
        app: bookstore-demo
    spec:
      containers:
      - name: bookstore-demo
        image: bookstore-demo:2.4.4
        imagePullPolicy: Never
        securityContext:
          readOnlyRootFilesystem: true
        envFrom:
        - configMapRef:
            name: name
        volumeMounts:
        - name: tmp
          mountPath: /tmp
      volumes:
      - name: tmp
        emptyDir: {}

The changes are the following:

- Include the `volumes` section with a single volume definition named `tmp` with an `emptyDir` definition.
- Include a `volumeMounts` section for the `tmp` volume, mounted at the `/tmp` path, to allow writing to that specific path. This enables the unzip of the bwce-runtime as well as all the additional activities that are required.
- To trigger this behavior, include the `readOnlyRootFilesystem` flag in the `securityContext` section.
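
The example in this article uses a bare Pod, but the same three changes carry over if you deploy through a Deployment. The following is only a sketch under that assumption: the Deployment wrapper, the `replicas` value, and the `selector` are additions for illustration, while the container settings are copied from the Pod above.

```yaml
# Hypothetical Deployment wrapping the same Pod spec; not taken from the
# TIBCO documentation.
apiVersion: apps/v1
kind: Deployment
metadata:
  name: bookstore-demo
  labels:
    app: bookstore-demo
spec:
  replicas: 1                      # assumption: a single replica
  selector:
    matchLabels:
      app: bookstore-demo          # must match the template labels below
  template:
    metadata:
      labels:
        app: bookstore-demo
    spec:
      containers:
      - name: bookstore-demo
        image: bookstore-demo:2.4.4
        imagePullPolicy: Never
        securityContext:
          readOnlyRootFilesystem: true   # root file system becomes read-only
        envFrom:
        - configMapRef:
            name: name
        volumeMounts:
        - name: tmp
          mountPath: /tmp                # the only writable path
      volumes:
      - name: tmp
        emptyDir: {}                     # one fresh, empty /tmp per pod
```

Each replica gets its own emptyDir, so nothing written to /tmp is shared between pods or kept after a pod is removed.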

Conclusion

Incorporating a ReadOnlyFileSystem approach into your TIBCO BWCE images is a proactive strategy to fortify your application’s security posture. By curbing unnecessary write access and minimizing the potential avenues for unauthorized actions, you’re taking a vital step towards safeguarding your containerized environment.

This guide has unveiled the critical aspects of implementing such a security-enhancing measure, walking you through the process with clear instructions and practical examples. With a focus on reducing attack vectors and bolstering isolation, you can confidently deploy your TIBCO BWCE applications, knowing that you’ve fortified their runtime environment against potential threats.