GraalVM provides the capabilities to make Java a first-class language for creating microservices, on the same level as Go, Rust, Node.js, and others.

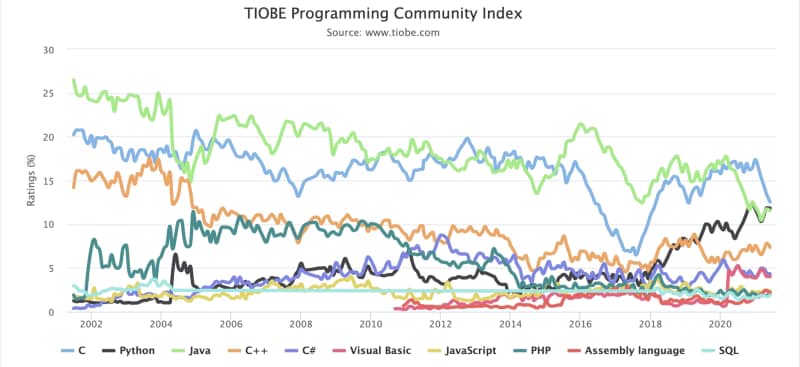

Java has been a leading language for decades. Almost every kind of software has been built with it: web servers, messaging systems, enterprise applications, development frameworks, and so on. This predominance is reflected in the most important rankings, such as the TIOBE index, as shown below:

But Java has always come with some trade-offs. The promise of portable code holds because the JVM lets us run the same code on different operating systems and ecosystems. At the same time, the interpreter approach adds some overhead compared with compiled options like C.

That overhead was never a problem until we went down the microservices route. With a server-based approach, an overhead of 100–200 MB is not a big problem compared with all the benefits it provides. But if we split that server into, for example, hundreds of services, each with a 50 MB overhead, this starts to become something to worry about.
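
To make the arithmetic concrete, here is a minimal sketch. The 200 MB and 50 MB figures come from the paragraph above; the count of 300 services is a hypothetical stand-in for "hundreds of services".

```java
public class OverheadEstimate {

    /** Total fixed runtime overhead, in MB, for a fleet of identical services. */
    static long totalOverheadMb(int serviceCount, long overheadPerServiceMb) {
        return (long) serviceCount * overheadPerServiceMb;
    }

    public static void main(String[] args) {
        // One server-based deployment: upper end of the 100-200 MB range from the text.
        long singleServer = totalOverheadMb(1, 200);
        // Hypothetical fleet: 300 services with 50 MB of overhead each.
        long microservices = totalOverheadMb(300, 50);

        System.out.println("Single server overhead:  " + singleServer + " MB");
        System.out.println("Microservices overhead:  " + microservices + " MB");
    }
}
```

The per-service overhead is smaller, but it is paid once per service, so the total grows linearly with the number of services.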

Another trade-off was startup time. Again, the abstraction layer makes startup slower, but in a client-server architecture it was not an important issue if we needed a few more seconds to start serving requests. Today, in the scalability era, the difference between startup times measured in seconds and startup times measured in milliseconds becomes critical, because faster startup provides better scalability and more efficient infrastructure usage.

So, how can we keep all the benefits of Java and still address these trade-offs that are now starting to be an issue? GraalVM is becoming the answer to all of this.

GraalVM is, in its own words, “a high-performance JDK distribution designed to accelerate the execution of applications written in Java and other JVM languages.” It provides an ahead-of-time compilation process that generates a native binary from Java code, removing the traditional overhead of running on the JVM.

Microservices are a specific focus of the project, and the promise of around 50x faster startup and 5x smaller memory footprint is just amazing. This is why GraalVM has become the foundation for high-level microservice development frameworks in Java, like Quarkus from Red Hat, Micronaut, or even the Spring Boot version powered by GraalVM.

So you are probably asking: how can I start using this? The first thing we need to do is go to the GitHub release page of the project, find the version for our OS, and follow the instructions provided here:

Once it is installed, it is time to start testing it, and what better way of doing so than creating a REST/JSON service and comparing it with a traditional OpenJDK 11-powered solution?

To keep this REST service as simple as possible and focus on the difference between both modes, I will use the Spark Java framework, a minimal framework for creating REST services.

I will share all the code in this GitHub repository, so if you would like to take a look, clone it from here:

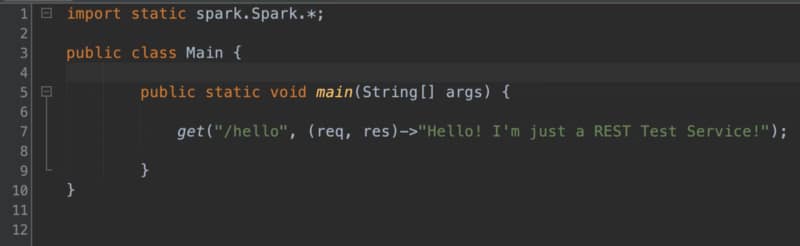

The code that we are going to use looks very simple, just a single line to create a REST service:
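
For readers who want something to run without any dependency, here is a comparable one-route service sketched with the JDK's built-in `com.sun.net.httpserver.HttpServer` instead of Spark. This is a stand-in, not the code from the repository: the `/hello` path, the JSON body, and the class name are all assumptions made for illustration.

```java
import com.sun.net.httpserver.HttpServer;
import java.io.IOException;
import java.io.OutputStream;
import java.net.InetSocketAddress;
import java.nio.charset.StandardCharsets;

public class RestServiceSketch {

    /** Starts a server with a single route and returns it so the caller can stop it. */
    static HttpServer start(int port) throws IOException {
        HttpServer server = HttpServer.create(new InetSocketAddress(port), 0);
        // With Spark Java, the equivalent is roughly a single call:
        //   get("/hello", (req, res) -> "Hello World");
        // This handler answers any request whose path starts with /hello (assumed path).
        server.createContext("/hello", exchange -> {
            byte[] body = "{\"message\":\"Hello World\"}".getBytes(StandardCharsets.UTF_8);
            exchange.getResponseHeaders().add("Content-Type", "application/json");
            exchange.sendResponseHeaders(200, body.length);
            try (OutputStream out = exchange.getResponseBody()) {
                out.write(body);
            }
        });
        server.start();
        return server;
    }

    public static void main(String[] args) throws IOException {
        // 4567 is Spark's default port; it is used here only to mirror that setup.
        start(4567);
    }
}
```

With Spark the whole service really does collapse to the one-liner shown in the comment; the JDK version is longer only because it writes the response by hand.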

Then, we will use a GraalVM Maven plugin for the whole compilation process. You can check all the options here:

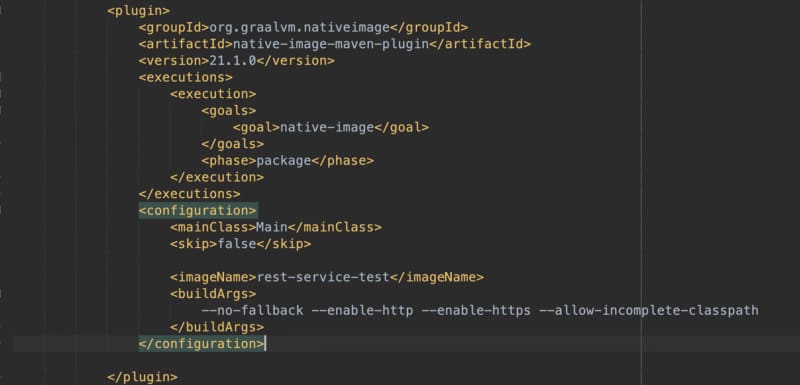

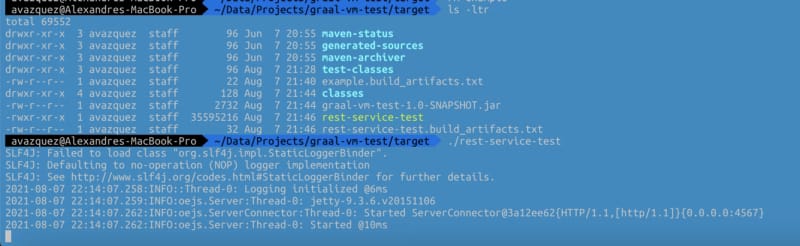

The compilation process takes a while (around 1–2 minutes). Keep in mind, though, that it compiles everything into a native binary: the only output you get is a single executable (named rest-service-test in my case) that contains everything you need to run your application.

And finally, we will have a single binary that is everything that we need to run our application:

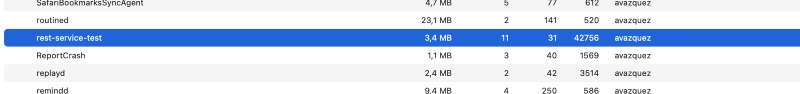

This binary is exceptional because it does not require a JVM on your local machine, and it can start in a few milliseconds. The binary takes 32 MB on disk and uses less than 5 MB of RAM.

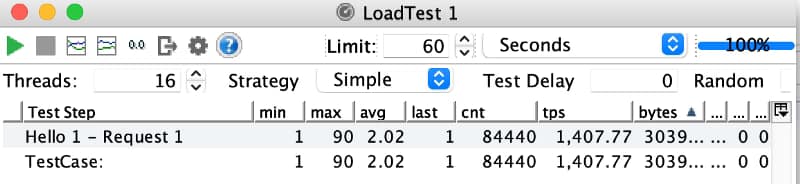

The output of this first tiny application is straightforward, as you saw, but I think you get the point. Now let’s see it in action: I will launch a small load test from my computer, with 16 threads sending requests to this endpoint:

As you can see, this is just incredible. Even allowing for the lack of network latency, since the test is triggered from the same machine, a single service sustains more than 1400 requests/sec over 1 minute, with a response time of 2 ms for each request.
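
If you want to reproduce this kind of measurement without a dedicated load-testing tool, the idea can be sketched in plain Java. This is not the tool used for the numbers above, just a minimal illustration: the URL (Spark's default port 4567 and a `/hello` path), the 1000 calls per thread, and all class and method names are assumptions.

```java
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.Callable;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;

public class LoadTestSketch {

    /** Aggregated figures for one run. */
    static final class Result {
        final long requests;
        final long elapsedNanos;
        final long totalLatencyNanos;

        Result(long requests, long elapsedNanos, long totalLatencyNanos) {
            this.requests = requests;
            this.elapsedNanos = elapsedNanos;
            this.totalLatencyNanos = totalLatencyNanos;
        }

        double requestsPerSecond() {
            return requests / (elapsedNanos / 1e9);
        }

        double averageLatencyMillis() {
            return requests == 0 ? 0 : totalLatencyNanos / 1e6 / requests;
        }
    }

    /** Runs `call` callsPerThread times on each of `threads` workers and times every call. */
    static Result run(int threads, int callsPerThread, Runnable call) throws Exception {
        ExecutorService pool = Executors.newFixedThreadPool(threads);
        try {
            List<Future<Long>> futures = new ArrayList<>();
            long start = System.nanoTime();
            for (int t = 0; t < threads; t++) {
                Callable<Long> worker = () -> {
                    long latency = 0;
                    for (int i = 0; i < callsPerThread; i++) {
                        long before = System.nanoTime();
                        call.run();
                        latency += System.nanoTime() - before;
                    }
                    return latency;
                };
                futures.add(pool.submit(worker));
            }
            long totalLatency = 0;
            for (Future<Long> future : futures) {
                totalLatency += future.get(); // waits for that worker to finish
            }
            long elapsed = System.nanoTime() - start;
            return new Result((long) threads * callsPerThread, elapsed, totalLatency);
        } finally {
            pool.shutdown();
        }
    }

    public static void main(String[] args) throws Exception {
        HttpClient client = HttpClient.newHttpClient();
        // Assumed endpoint: adjust host, port, and path to your own service.
        HttpRequest request = HttpRequest.newBuilder(URI.create("http://localhost:4567/hello")).build();
        Result result = run(16, 1000, () -> {
            try {
                client.send(request, HttpResponse.BodyHandlers.discarding());
            } catch (Exception e) {
                throw new RuntimeException(e);
            }
        });
        System.out.printf("%.0f requests/sec, %.2f ms average latency%n",
                result.requestsPerSecond(), result.averageLatencyMillis());
    }
}
```

Throughput is computed from wall-clock time for the whole run, while the average latency comes from timing each call individually, which are the same two figures quoted above.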

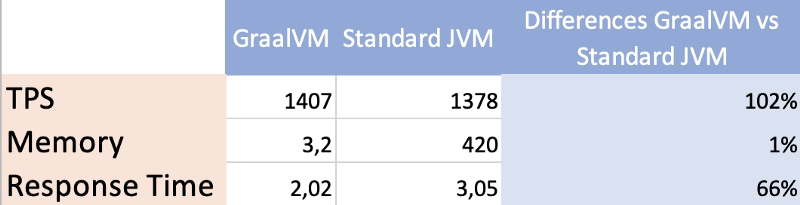

And how does that compare with a normal JAR-based application running exactly the same code? You can see the comparison in the table below:

In a nutshell, we have seen how tools such as GraalVM can make our JVM-based programs ready for a microservices environment, avoiding the usual issues of high memory footprint and slow startup time, which are critical when we adopt a full cloud-native strategy in our companies or projects.

But the truth must be told: it is not always as simple as in this sample. Depending on the libraries you use, generating the native image can be much more complex and require a lot of configuration, or it may simply be impossible. So not everything is solved yet, but the future looks bright and full of hope.
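
A typical source of that extra configuration is reflection. The native image build analyzes at build time which classes are reachable, so code that looks up a class by a name known only at run time usually needs the target registered in the native image configuration. The sketch below is a hypothetical illustration of that pattern, not code from the sample project.

```java
public class ReflectionSketch {

    /** Loads and instantiates a class whose name is only known at run time. */
    static Object instantiate(String className) throws ReflectiveOperationException {
        // The build-time analysis cannot tell which class this string will name.
        Class<?> type = Class.forName(className);
        return type.getDeclaredConstructor().newInstance();
    }

    public static void main(String[] args) throws Exception {
        // The class name comes from the command line; java.util.ArrayList is just a default.
        String name = args.length > 0 ? args[0] : "java.util.ArrayList";
        Object instance = instantiate(name);
        System.out.println("Created " + instance.getClass().getName());
    }
}
```

On a regular JVM this works for any class on the classpath. Many libraries (JSON mappers, dependency injection containers, and so on) rely on the same mechanism internally, which is why some of them need additional native image configuration or dedicated framework support.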