If You Aren’t Careful You Can Block The Scalability Of Your Workloads


One of the great things about container-based development is defining isolated spaces with guaranteed resources such as CPU and memory. Kubernetes extends this to the namespace level, so you can have different virtual environments that cannot exceed a specified level of resource usage.

To define this, Kubernetes offers the Resource Quota, which works at the namespace level. From its own definition (https://kubernetes.io/docs/concepts/policy/resource-quotas/):

A resource quota, defined by a ResourceQuota object, provides constraints that limit aggregate resource consumption per namespace. It can limit the quantity of objects that can be created in a namespace by type, as well as the total amount of compute resources that may be consumed by resources in that namespace.

Resource Quotas offer several options, but in this article we will focus on the main ones:

- limits.cpu: Across all pods in a non-terminal state, the sum of CPU limits cannot exceed this value.

- limits.memory: Across all pods in a non-terminal state, the sum of memory limits cannot exceed this value.

- requests.cpu: Across all pods in a non-terminal state, the sum of CPU requests cannot exceed this value.

- requests.memory: Across all pods in a non-terminal state, the sum of memory requests cannot exceed this value.
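To make the "sum across all pods in a non-terminal state" wording concrete, here is a minimal sketch of the check for `limits.cpu` only. It is not the Kubernetes implementation: the `Pod` class, the function names, and the 3000m quota are hypothetical, and it assumes the terminal phases are `Succeeded` and `Failed`.

```python
from dataclasses import dataclass

# Pod phases treated as terminal; such pods do not count against the quota.
TERMINAL_PHASES = {"Succeeded", "Failed"}


@dataclass
class Pod:
    """Hypothetical, simplified pod: only what the quota check needs."""
    name: str
    cpu_limit_m: int        # CPU limit in millicores (1000m = 1 vCPU)
    phase: str = "Running"


def cpu_limits_in_use(pods):
    """Sum CPU limits over pods that are not in a terminal state."""
    return sum(p.cpu_limit_m for p in pods if p.phase not in TERMINAL_PHASES)


def admits(pods, new_pod, hard_limits_cpu_m):
    """True if adding new_pod keeps the summed limits within limits.cpu."""
    return cpu_limits_in_use(pods) + new_pod.cpu_limit_m <= hard_limits_cpu_m


pods = [Pod("a", 1000), Pod("b", 1000), Pod("done", 1000, phase="Succeeded")]
print(cpu_limits_in_use(pods))                               # 2000
print(admits(pods, Pod("c", 1000), hard_limits_cpu_m=3000))  # True
print(admits(pods, Pod("d", 2000), hard_limits_cpu_m=3000))  # False
```

Note that the check never looks at how much CPU the pods actually consume: only the declared limits are summed.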

So you may think it is a great idea to define limits.cpu and limits.memory quotas to make sure you never exceed that amount of usage by any means. But you need to be careful about what this implies, and to illustrate it I will use an example.

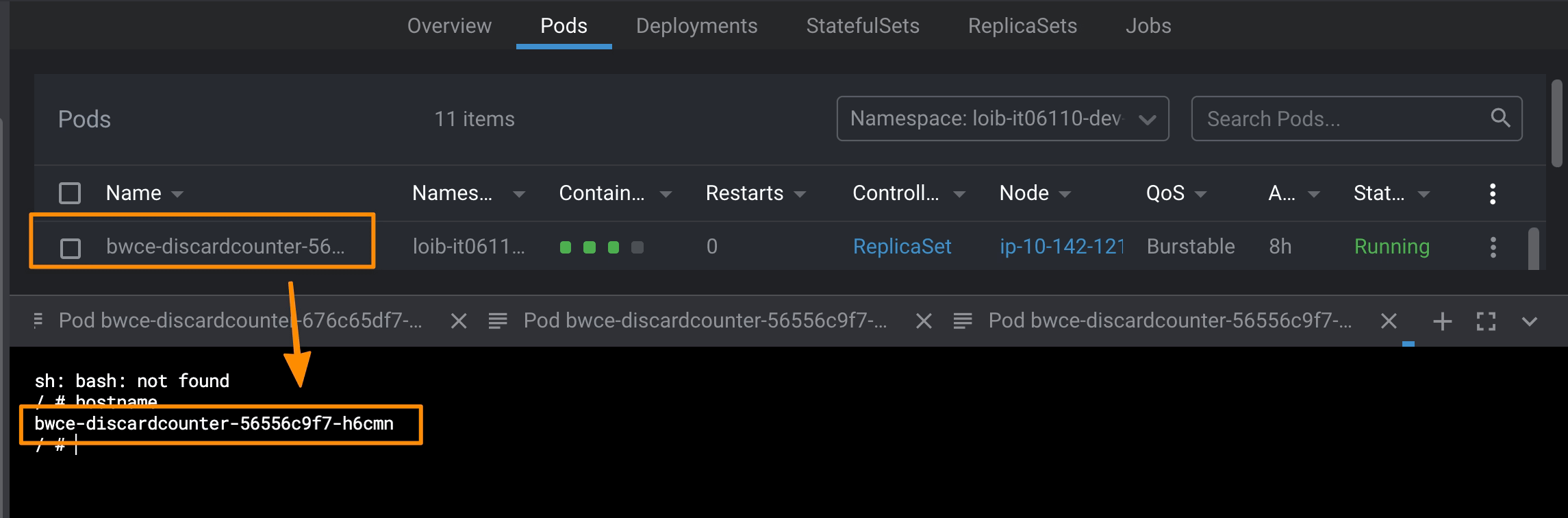

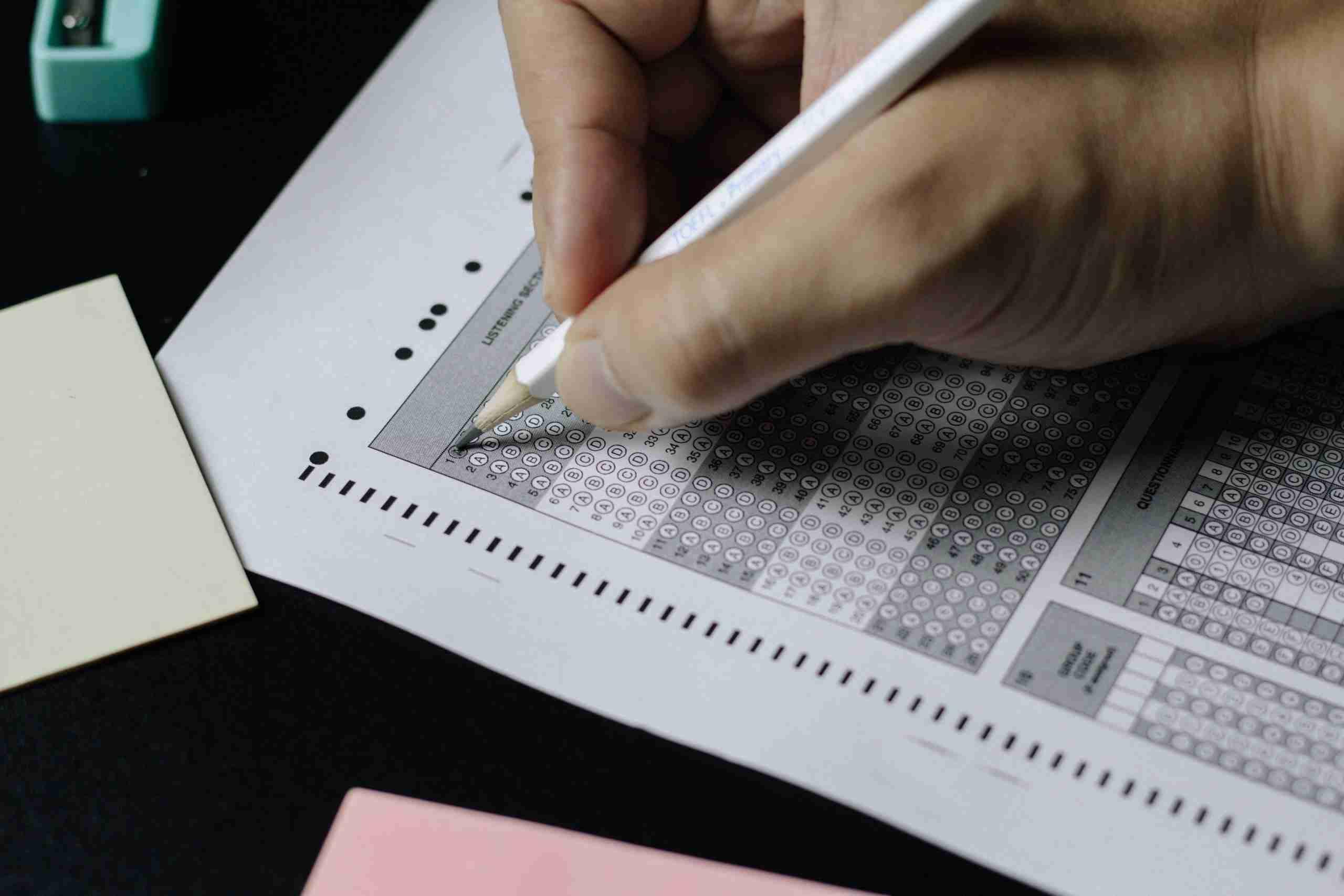

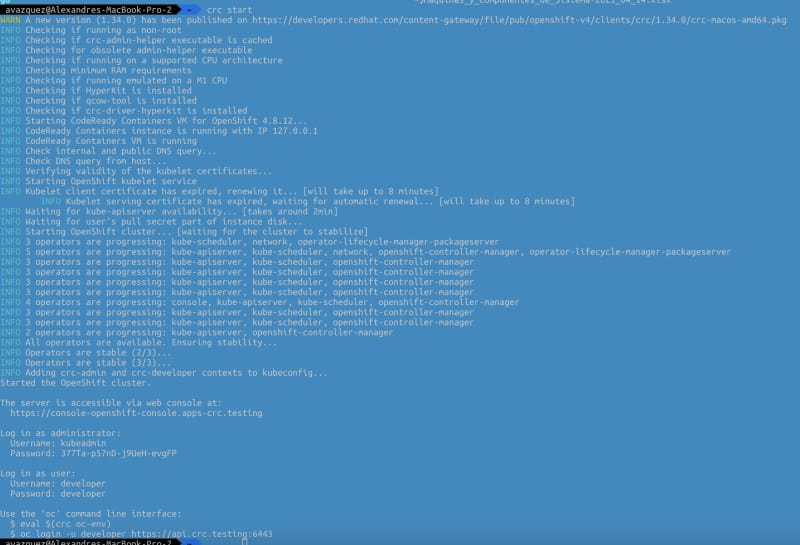

I have a single workload with a single pod and the following resource configuration:

- requests.cpu: 500m

- limits.cpu: 1

- requests.memory: 500Mi

- limits.memory: 1 GB

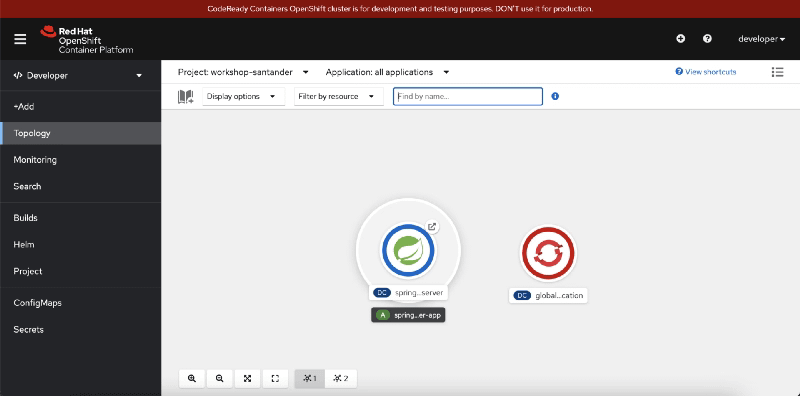

The application is Java-based, exposes a REST service, and has a Horizontal Pod Autoscaler rule configured to scale out when CPU usage exceeds 50%.
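As a rough sketch of how such a rule decides to scale, the snippet below applies the replica formula from the Kubernetes Horizontal Pod Autoscaler documentation, where CPU utilization is measured as a percentage of the pod's CPU request (500m here), not of its limit. The function names and the sample usage values are illustrative assumptions, and the real controller also applies a tolerance and stabilization behavior that are ignored here.

```python
import math

CPU_REQUEST_M = 500   # requests.cpu of the pod, in millicores
TARGET_PCT = 50       # autoscaler target: 50% average CPU utilization


def cpu_utilization_pct(usage_m, request_m):
    """Utilization as the autoscaler sees it: usage relative to the request."""
    return 100.0 * usage_m / request_m


def desired_replicas(current_replicas, current_pct, target_pct):
    """desired = ceil(current replicas * current metric / target metric)."""
    return math.ceil(current_replicas * current_pct / target_pct)


# Hypothetical usage samples for a single running pod.
print(cpu_utilization_pct(150, CPU_REQUEST_M))   # 30.0
print(desired_replicas(1, 30.0, TARGET_PCT))     # 1
print(desired_replicas(1, 80.0, TARGET_PCT))     # 2
```

A pod using 150m sits at 30% of its request, so the autoscaler leaves it alone. At 400m (80%), it asks for a second replica.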

So we start from the initial situation: a single instance that needs 150m of vCPU and about 200 MB of RAM to run, a little less than 50%, so the autoscaler is not triggered. But the Resource Quota counts the limits of the pod (1 vCPU and 1 GB), so that is the amount we have blocked. Then more requests arrive and we need to scale to two instances. To simplify the calculations, we will assume that each instance uses the same amount of resources, and we will continue that way until we reach 8 instances. Let's see how this changes the limits charged against the quota (the figure that caps how many objects I can create in my namespace) compared with the resources I am actually using.
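The progression from one to eight instances can be tabulated with a short sketch. It covers CPU only and assumes the figures given above: a 1 vCPU limit and 150m of real usage per pod, plus a `limits.cpu` quota of 8 vCPU for the namespace. The function and constant names are made up for illustration.

```python
# Figures from the scenario, in millicores to keep the arithmetic exact.
CPU_LIMIT_M = 1000          # limits.cpu per pod (1 vCPU)
CPU_USAGE_M = 150           # assumed real CPU usage per pod
QUOTA_LIMITS_CPU_M = 8000   # assumed limits.cpu quota for the namespace


def quota_snapshot(replicas):
    """Return (charged, used, idle) millicores for a given replica count."""
    charged = replicas * CPU_LIMIT_M   # what the quota counts
    used = replicas * CPU_USAGE_M      # what the pods really consume
    return charged, used, charged - used


def can_scale_out(replicas):
    """True if one more pod still fits under the limits.cpu quota."""
    return (replicas + 1) * CPU_LIMIT_M <= QUOTA_LIMITS_CPU_M


print(f"{'pods':>4} {'charged':>8} {'used':>6} {'idle':>6} scale-out?")
for n in range(1, 9):
    charged, used, idle = quota_snapshot(n)
    print(f"{n:>4} {charged:>7}m {used:>5}m {idle:>5}m {can_scale_out(n)}")
```

With these inputs the eighth pod is the last one admitted: the quota is charged the full 8000m while the pods consume 1200m between them.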

So, for about 1.2 vCPU actually in use (8 instances at 150m each), I have blocked 8 vCPU. If 8 vCPU is my Resource Quota limit, I cannot create more instances, even though 6.8 vCPU that I am allowed to use sit idle.

Yes, I can guarantee that I will never use more than 8 vCPU, but I have been blocked very early on the way there, which hurts the behavior and scalability of my workloads.

Because of that, you need to be very careful when defining these kinds of limits and be clear about what you are trying to achieve, because in solving one problem you may create another.

I hope this helps you prevent this issue from happening in your daily work, or at least keep it in mind when you face similar scenarios.
