Your pods are being OOMKilled at 3 AM. Your latency p99 spikes every few minutes with no obvious cause. Your cluster scheduler is placing workloads on nodes that can’t sustain them. In most production Kubernetes incidents, misconfigured resource requests and limits are either the direct cause or an accelerating factor.

This is not a “what are requests and limits” tutorial. It is a deep technical guide for engineers who run Kubernetes in production and need to understand what actually happens inside the kernel when these values are set — and what the consequences are when they are wrong.

What Requests and Limits Actually Are

The Kubernetes documentation explains requests and limits at the API level. What it underexplains is the enforcement mechanism: cgroups.

When the kubelet admits a pod onto a node, it creates a cgroup hierarchy for that pod under /sys/fs/cgroup/. Each container in the pod gets its own cgroup. The values you set in your pod spec translate directly into cgroup parameters:

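CPU request → cpu.shares (cgroups v1) or cpu.weight (cgroups v2)

CPU limit → cpu.cfs_quota_us and cpu.cfs_period_us (cgroups v1) or cpu.max (cgroups v2)

Memory request → no hard cgroup setting by default. The scheduler uses it for placement, and the kubelet uses it for eviction ranking and oom_score_adj. On cgroups v2 nodes with the MemoryQoS feature gate enabled, it also sets memory.min.

Memory limit → memory.limit_in_bytes (cgroups v1) or memory.max (cgroups v2); hard enforcement, triggers OOMKill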
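As a rough illustration of the CPU and memory-limit rows above, the sketch below reproduces the arithmetic commonly described for cgroups v1: 1024 shares per requested core, and a quota proportional to the limit within a 100ms period. The function names are hypothetical and this is not the kubelet's actual code.

```python
def cpu_shares(request_millicores: int) -> int:
    """cgroups v1 cpu.shares for a CPU request: 1024 shares per core, never below 2."""
    return max(2, request_millicores * 1024 // 1000)


def cfs_quota_us(limit_millicores: int, period_us: int = 100_000) -> int:
    """cpu.cfs_quota_us for a CPU limit: microseconds of CPU time allowed per period."""
    return limit_millicores * period_us // 1000


def memory_limit_bytes(limit_mib: int) -> int:
    """memory.limit_in_bytes for a memory limit expressed in Mi."""
    return limit_mib * 1024 * 1024


print(cpu_shares(250))          # 256
print(cfs_quota_us(500))        # 50000
print(memory_limit_bytes(512))  # 536870912
```

Shares are relative weights that only matter under contention, while the quota and the memory limit are absolute ceilings.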

The scheduler uses requests to make placement decisions. It does not know about actual utilization — it knows about committed capacity. A node with 4 cores where running pods have a total CPU request of 3.5 cores has 0.5 cores of schedulable capacity remaining, even if actual CPU utilization is 15%.

This is why you can have a fully “utilized” cluster (by requests) where nodes are idle, and why you can have nodes at 95% CPU utilization that still accept new pods because their requests are low.
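The gap between committed and actual capacity can be made concrete with a small model. This is a simplified sketch of a request-based fit check, not the scheduler's implementation; the Node type and its field names are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class Node:
    allocatable_millicores: int
    requested_millicores: int  # sum of CPU requests of pods already placed
    used_millicores: int       # measured usage; plays no part in the fit check


def fits(node: Node, pod_request_millicores: int) -> bool:
    """Request-based fit: only committed capacity is compared against allocatable."""
    return node.requested_millicores + pod_request_millicores <= node.allocatable_millicores


idle_but_full = Node(allocatable_millicores=4000, requested_millicores=3500, used_millicores=600)
busy_but_open = Node(allocatable_millicores=4000, requested_millicores=1000, used_millicores=3800)

print(fits(idle_but_full, 1000))  # False: 15% utilized, but requests are nearly exhausted
print(fits(busy_but_open, 1000))  # True: 95% utilized, but requests leave room
```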

The kubelet uses limits to enforce runtime constraints via those cgroup parameters. The scheduler never sees limits.

CPU vs Memory: Why They Behave Fundamentally Differently

This is the most consequential thing to understand about Kubernetes resource management, and it is routinely misunderstood even by experienced engineers.

CPU Is Compressible

CPU is a time-shared resource. If your container tries to use more CPU than its limit allows, the Linux CFS scheduler simply throttles it — it stops getting CPU time until the next scheduling period. The process continues. It just waits.

From the application’s perspective: things slow down. Latency increases. Throughput drops. But the process does not die.

Memory Is Not Compressible

Memory is not time-shared. If your container tries to allocate memory beyond its limit, there is no “slow down” path. The Linux OOM killer selects a process in the cgroup and kills it. The container dies.

From the application’s perspective: the process is terminated. Kubernetes restarts the container. You see OOMKilled in kubectl describe pod.

| Property | CPU | Memory |
|---|---|---|
| Enforcement | CFS throttling | OOM Kill |
| Process survives? | Yes (degraded performance) | No (killed and restarted) |
| Compressible? | Yes | No |
| Scheduler visibility | Requests only | Requests only |
| Over-limit consequence | Latency spikes | Container restart |
| Setting limits: recommended? | Situational (see below) | Always |

This asymmetry drives every recommendation in the rest of this guide.

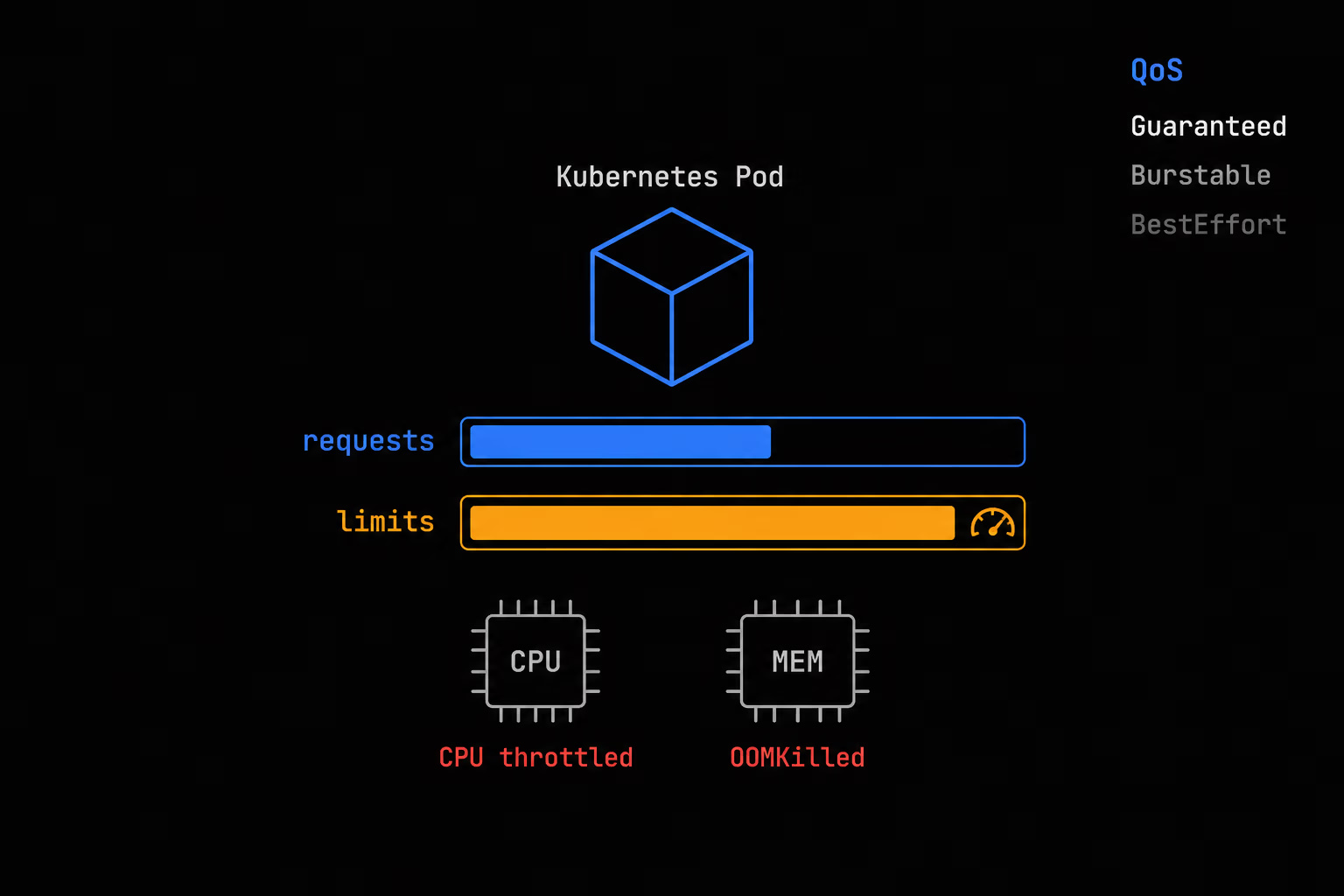

QoS Classes: Eviction Priority Under Pressure

Kubernetes assigns each pod a Quality of Service (QoS) class based on the requests and limits set across all its containers. This class determines eviction priority when a node is under memory pressure.

Guaranteed

Condition: Every container has CPU and memory requests and limits set, and requests equal limits for both CPU and memory.

    resources:
      requests:
        cpu: "500m"
        memory: "512Mi"
      limits:
        cpu: "500m"
        memory: "512Mi"

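Guaranteed pods are among the last to be evicted. Under node memory pressure the kubelet first ranks pods by whether their usage exceeds their requests, and a Guaranteed pod cannot exceed its memory request without first hitting its own limit. They also get the most predictable resource allocation on the node.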

Warning: Guaranteed does not mean “always available.” It means “last to be killed.” On a heavily overloaded node, even Guaranteed pods can be evicted.

Burstable

Condition: At least one container has a CPU or memory request or limit set, but the pod does not meet Guaranteed criteria.

    resources:
      requests:
        cpu: "250m"
        memory: "256Mi"
      limits:
        cpu: "1000m"
        memory: "1Gi"

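Burstable pods typically rank after BestEffort and before Guaranteed for eviction. Because the kubelet ranks first by whether usage exceeds requests, a Burstable pod running above its memory request is an early candidate, while one running within its request is treated much like a Guaranteed pod. They can burst above their request when capacity is available, but that burst is not protected when the node is under pressure.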

BestEffort

Condition: No container in the pod has any CPU or memory requests or limits set.

# No resources block at all

BestEffort pods are among the first to be evicted: with no requests, any usage counts as exceeding requests. They get whatever capacity is left over after scheduled workloads consume their requested share. On a loaded node, they may be starved entirely.

In production: never run stateful workloads or business-critical services as BestEffort. The Kubernetes scheduler will place them anywhere, and the kubelet will kill them first.
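The three conditions above can be condensed into a small classifier. This is a minimal sketch, assuming each container is described by a plain dict and that quantities are compared as strings (so "500m" and "0.5" would not be treated as equal, unlike in Kubernetes). The qos_class function is hypothetical, not a Kubernetes API.

```python
def qos_class(containers: list[dict]) -> str:
    """Classify a pod as Guaranteed, Burstable, or BestEffort.

    Each container looks like {"requests": {...}, "limits": {...}} with optional
    "cpu" and "memory" keys. A limit without a request is treated as
    request == limit, which is how Kubernetes defaults it.
    """
    any_set = False
    all_guaranteed = True
    for container in containers:
        requests = container.get("requests", {})
        limits = container.get("limits", {})
        if requests or limits:
            any_set = True
        for resource in ("cpu", "memory"):
            limit = limits.get(resource)
            request = requests.get(resource, limit)
            if limit is None or request != limit:
                all_guaranteed = False
    if not any_set:
        return "BestEffort"
    return "Guaranteed" if all_guaranteed else "Burstable"


guaranteed = [{"requests": {"cpu": "500m", "memory": "512Mi"},
               "limits": {"cpu": "500m", "memory": "512Mi"}}]
burstable = [{"requests": {"cpu": "250m", "memory": "256Mi"},
              "limits": {"cpu": "1000m", "memory": "1Gi"}}]

print(qos_class(guaranteed))  # Guaranteed
print(qos_class(burstable))   # Burstable
print(qos_class([{}]))        # BestEffort
```

Note that a single sidecar without resources is enough to move an otherwise Guaranteed pod to Burstable, because the class is computed over all containers in the pod.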

Common Misconfiguration Patterns and Their Consequences

Pattern 1: No Requests or Limits Set

Effect: BestEffort QoS. Among the first to be evicted under memory pressure. The scheduler has no data for placement decisions: the pod passes the resource fit check on every node, and node scoring (the NodeResourcesFit plugin, with the LeastAllocated strategy by default) looks at requests rather than actual usage. These pods can therefore land on the same nodes as heavily loaded workloads.

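Real consequence: Your “lightweight” background jobs take down your API servers at 3 AM. An unbounded job spikes in memory on a node it shares with them, and if the kernel’s node-level OOM killer fires before the kubelet can evict the BestEffort pods, it may pick an API server process instead.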

Pattern 2: Requests Equal Limits (Guaranteed QoS)

This is the common “safe” pattern recommended in older Kubernetes documentation. It is not wrong, but it has a trap:

CPU limits = CPU requests means CPU throttling is guaranteed to trigger. Your pod will be throttled the moment it tries to burst above the request — during startup, during GC, during a traffic spike — even if the node has abundant free CPU.

For latency-sensitive applications, this means predictable throttling spikes at exactly the moments you need the most CPU.

Memory: Setting memory request = memory limit is appropriate and recommended. The behavior is correct: the pod runs in a controlled memory budget.

Pattern 3: Limits Much Higher Than Requests (Burstable with High Ratio)

    resources:
      requests:
        cpu: "100m"
        memory: "128Mi"
      limits:
        cpu: "4000m"
        memory: "4Gi"

This is the opposite extreme. The scheduler thinks this pod needs 100m CPU and 128Mi memory. Dozens of these can be scheduled onto a single node. When they all burst simultaneously — which they will, during a deployment, a traffic event, or a GC cycle — the node is overloaded, memory pressure triggers OOMKill cascades, and the scheduler has no idea anything is wrong because the committed capacity (by requests) looks fine.

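The limit:request ratio matters. A 10x or 20x memory limit:request ratio on many pods is a recipe for node instability. A reasonable starting point is 1.5x–2x for memory. CPU tolerates a higher ratio, such as 2x–4x, because an overcommitted CPU is throttled or time-shared rather than killed.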
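To see how quickly a high ratio compounds, the sketch below counts how many identical pods fit on a node by request and compares the sum of their limits with the node's memory. It is a simplification that ignores system reservations and the per-node pod cap, and the node size is an assumed example.

```python
def node_overcommit(node_memory_mib: int, request_mib: int, limit_mib: int) -> tuple[int, float]:
    """Return (pods that fit by request, sum of limits divided by node memory)."""
    pods = node_memory_mib // request_mib
    return pods, pods * limit_mib / node_memory_mib


# The Pattern 3 spec on an assumed 16Gi node: 128Mi request, 4Gi limit.
print(node_overcommit(16 * 1024, request_mib=128, limit_mib=4096))  # (128, 32.0)

# The same request with a 2x limit.
print(node_overcommit(16 * 1024, request_mib=128, limit_mib=256))   # (128, 2.0)
```

When every slot on the node is filled by request, the worst-case overcommit equals the limit:request ratio, which is why the ratio is the number to govern.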

Pattern 4: CPU Limits Set to “Be Safe”

This is the subtlest misconfiguration and the one with the most hidden latency impact. We cover it in depth in the next section.

The CPU Throttling Problem: CFS Bandwidth and Hidden Latency

This is where many production Kubernetes deployments have a silent performance problem they cannot easily diagnose.

How CFS Bandwidth Throttling Works

The Linux Completely Fair Scheduler (CFS) enforces CPU limits using bandwidth control. The relevant parameters are:

cpu.cfs_period_us: the accounting period, default 100ms

cpu.cfs_quota_us: how many microseconds of CPU time the cgroup can use per period

If you set cpu: "500m" as a limit, Kubernetes sets cpu.cfs_quota_us = 50000 (50ms per 100ms period). This means the container can use at most 50% of one CPU core per 100ms window.

The problem: quota is enforced per period, not as a moving average. If your container uses its full 50ms allocation in the first 60ms of a period, it is throttled for the remaining 40ms — even if the node has 7 idle CPUs. The CPU sits idle. Your container waits.
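A small model of a single period shows how much of it a container can spend throttled. This is a sketch under a simplifying assumption: all runnable threads start at the beginning of the period and run flat out until the quota is gone. The function name and parameters are hypothetical.

```python
def throttled_ms(limit_millicores: int, demand_ms: float,
                 threads: int = 1, period_ms: float = 100.0) -> float:
    """Wall-clock time within one CFS period that the container spends throttled.

    demand_ms is the total CPU time the container wants in this period,
    spread evenly over `threads` runnable threads.
    """
    quota_ms = limit_millicores / 1000 * period_ms
    if demand_ms <= quota_ms:
        return 0.0
    # With several threads the quota is burned in parallel, so it runs out sooner.
    wall_ms_to_exhaust = quota_ms / threads
    return period_ms - wall_ms_to_exhaust


print(throttled_ms(500, demand_ms=40))             # 0.0
print(throttled_ms(500, demand_ms=80))             # 50.0
print(throttled_ms(500, demand_ms=80, threads=4))  # 87.5
```

The multi-threaded case is the one that surprises people: four busy threads exhaust a 50ms quota in 12.5ms of wall-clock time and then sit idle for the rest of the period.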

Why This Causes Latency Spikes Even at Low Utilization

This is counterintuitive and the source of many production mysteries. You can have a container running at 10% average CPU utilization that is regularly throttled, because its instantaneous CPU usage within a single 100ms window exceeds its quota.

Java applications with JVM garbage collection are particularly vulnerable. GC causes a CPU burst of short duration. If that burst exceeds the per-period quota, the GC pause is extended artificially by throttling — even though the GC event itself would have been short.

The same applies to Node.js event loop processing, Python import at startup, and any application that has bursty CPU behavior (which is most of them).
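The low-utilization case can be reproduced with a toy simulation. This is a sketch, assuming a 500m limit (50ms quota per 100ms period) and a workload that is idle except for one 100ms CPU burst per second. Unmet demand carries over to the next period, which is how the burst gets stretched.

```python
def simulate(demands_ms, quota_ms=50.0, period_ms=100.0):
    """Run per-period CPU demands through a fixed quota.

    Returns (average utilization in cores, number of periods that ended
    with work still waiting, i.e. throttled periods).
    """
    backlog = 0.0
    used = 0.0
    throttled_periods = 0
    for demand in demands_ms:
        backlog += demand
        run = min(backlog, quota_ms)
        used += run
        backlog -= run
        if backlog > 0:
            throttled_periods += 1
    return used / (len(demands_ms) * period_ms), throttled_periods


# One second of wall-clock time: a single 100ms burst, then idle.
avg_cores, throttled = simulate([100, 0, 0, 0, 0, 0, 0, 0, 0, 0])
print(avg_cores, throttled)  # 0.1 1
```

Average usage is 0.1 cores against a 0.5 core limit, yet the burst was throttled and needed two periods to complete instead of one.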

The Cloudflare and Netflix Evidence

Cloudflare published findings showing that CPU throttling was responsible for significant tail latency increases in their containerized workloads, and that removing CPU limits reduced p99 latency substantially for services that appeared to have headroom. Netflix has documented similar patterns in their capacity planning work, noting that per-period quota enforcement does not model real application CPU behavior accurately.

The kernel community has been aware of this for years. Kernel fixes to CFS bandwidth accounting (merged around Linux 5.4) removed some spurious throttling, so nodes on older kernels fare worse under the same limits. Moving to cgroups v2, which is stable as of Kubernetes 1.25, does not change the mechanism: the quota is written to cpu.max instead of cpu.cfs_quota_us, but the same per-period enforcement applies. The fundamental issue remains: CPU limits throttle bursty applications unpredictably.

The Recommendation: Consider Not Setting CPU Limits

This is controversial but grounded in the evidence:

For latency-sensitive services: do not set CPU limits. Set CPU requests accurately and rely on the scheduler for placement.

The argument:

– CPU throttling is a soft failure mode that is hard to observe and diagnose

– OOMKill is a hard failure mode that is visible and recoverable

– CPU requests give the scheduler accurate placement data without creating throttling

– Nodes handle CPU oversubscription gracefully through time-sharing; they do not handle memory oversubscription gracefully

When to still set CPU limits:

– Multi-tenant clusters where noisy neighbor isolation is critical

– Batch workloads where predictable CPU allocation matters more than latency

– When your monitoring and alerting cannot yet catch CPU starvation at the node level

When you do not set CPU limits, you must set CPU requests accurately. A request of 100m for a service that normally uses 800m means the scheduler places it on a node that cannot actually sustain it. The result is real CPU starvation, not artificial throttling — but it is CPU starvation nonetheless.

Memory: Always Set Limits

The contrast with CPU is direct. Memory is non-compressible. A container that leaks memory or has a runaway allocation will consume all available node memory if unconstrained. This does not degrade gracefully — it triggers the OOM killer, which may kill unrelated processes on the node.

Always set memory limits. Always.

The consequence — OOMKill — is visible, logged, and Kubernetes handles it by restarting the container. An OOMKilled exit code is actionable: you either have a memory leak, your limit is too low, or your sizing methodology is wrong. All three are diagnosable.

The alternative — no memory limit — means a single leaking pod can destabilize an entire node and trigger eviction cascades affecting unrelated workloads.

Set memory requests equal to the p95 steady-state usage of your application. Set memory limits at 1.5x–2x the request to absorb traffic spikes and GC pressure. Profile your application under load to establish these baselines.

Vertical Pod Autoscaler (VPA)

VPA is the Kubernetes component designed to solve the sizing problem automatically. It observes actual resource utilization and recommends (or applies) adjusted requests.

How VPA Works

VPA has three components:

- Recommender: Watches historical metrics and computes recommended requests based on observed utilization. Does not modify pods.

- Updater: Evicts pods whose current requests differ significantly from recommendations (when VPA mode is Auto or Recreate).

- Admission Controller: Mutates pod specs at admission time to apply recommendations from the Recommender.

    apiVersion: autoscaling.k8s.io/v1
    kind: VerticalPodAutoscaler
    metadata:
      name: api-server-vpa
      namespace: production
    spec:
      targetRef:
        apiVersion: apps/v1
        kind: Deployment
        name: api-server
      updatePolicy:
        updateMode: "Off"  # Recommend only; do not evict pods
      resourcePolicy:
        containerPolicies:
          - containerName: api-server
            minAllowed:
              cpu: 100m
              memory: 128Mi
            maxAllowed:
              cpu: 4000m
              memory: 4Gi
            controlledResources: ["cpu", "memory"]
            controlledValues: RequestsAndLimits

VPA Modes

| Mode | Behavior |
|---|---|
| Off | Compute recommendations only. No pod mutations. |
| Initial | Apply recommendations to new pods only. Do not evict running pods. |
| Recreate | Evict pods when recommendations change significantly. |
| Auto | Currently equivalent to Recreate. May change in future versions. |

When to Use VPA

Right-sizing during initial rollout: Run VPA in Off mode for 1–2 weeks on a new service. Review recommendations before applying. This is the most valuable use case.

Services with unpredictable or seasonal load patterns: VPA adapts requests based on observed behavior. Combined with HPA for horizontal scaling, this gives you right-sized replicas that scale out horizontally.

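VPA and HPA cannot both manage the same metric. HPA computes CPU utilization as a percentage of the CPU request, so if HPA is scaling on CPU utilization, any VPA change to CPU requests shifts the HPA signal and the two will fight each other. Setting controlledValues: RequestsOnly does not avoid this, because the request is still being changed. Restrict VPA to memory with controlledResources: ["memory"], or keep it in Off mode for CPU, and let HPA manage scale.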
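The interaction is easiest to see with numbers. The sketch below applies the utilization and replica formulas documented for HPA, ignoring its tolerance band and stabilization windows. The replica count, usage, and target are assumed example values.

```python
import math


def hpa_utilization(usage_millicores: float, request_millicores: float) -> float:
    """CPU utilization as HPA sees it: usage as a percentage of the request."""
    return 100.0 * usage_millicores / request_millicores


def desired_replicas(current_replicas: int, utilization: float, target: float) -> int:
    """ceil(currentReplicas * currentMetric / targetMetric)."""
    return math.ceil(current_replicas * utilization / target)


usage = 400  # millicores per pod, unchanged throughout

before = hpa_utilization(usage, request_millicores=500)   # 80.0
after = hpa_utilization(usage, request_millicores=1000)   # 40.0, after VPA doubles the request

print(desired_replicas(4, before, target=60))  # 6
print(desired_replicas(4, after, target=60))   # 3
```

Nothing about the workload changed, yet HPA moves from scaling out to scaling in purely because the request was rewritten underneath it.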

VPA limitations:

– Requires pod restarts to apply recommendations (Updater evicts pods)

– Does not work well with stateful workloads in strict availability windows

– Recommender needs sufficient history (at least a few days) to produce reliable recommendations

– Does not account for traffic spikes that haven’t been observed yet

LimitRange and ResourceQuota: Namespace-Level Guardrails

Requests and limits on individual pods solve the per-workload problem. LimitRange and ResourceQuota solve the namespace and cluster-level governance problem.

LimitRange

LimitRange sets default requests and limits for containers in a namespace, and enforces minimum/maximum boundaries. Any pod admitted to the namespace that does not have explicit requests/limits set will receive the defaults.

    apiVersion: v1
    kind: LimitRange
    metadata:
      name: default-limits
      namespace: production
    spec:
      limits:
        - type: Container
          default:
            cpu: "500m"
            memory: "512Mi"
          defaultRequest:
            cpu: "100m"
            memory: "128Mi"
          max:
            cpu: "4000m"
            memory: "8Gi"
          min:
            cpu: "50m"
            memory: "64Mi"
        - type: Pod
          max:
            cpu: "8000m"
            memory: "16Gi"
        - type: PersistentVolumeClaim
          max:
            storage: "50Gi"
          min:
            storage: "1Gi"

Key behaviors:

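– default applies as the limit for any container that does not set its own limit, including containers that set only a request

– defaultRequest applies as the request for containers that set neither a request nor a limit; a container that sets only a limit gets a request equal to that limit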

– max and min cause admission to fail if violated

– LimitRange applies at admission time — changing it does not affect running pods
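The defaulting rules can be sketched as a small function. This models the behavior Kubernetes documents for LimitRange defaults (including the case where a container sets only a limit, which makes its request equal to that limit) and skips min/max validation. The apply_defaults helper is hypothetical.

```python
def apply_defaults(container: dict, default: dict, default_request: dict) -> dict:
    """Fill in missing requests and limits for one container."""
    requests = dict(container.get("requests", {}))
    limits = dict(container.get("limits", {}))

    # A limit set without a request makes the request equal to that limit.
    for resource, value in limits.items():
        requests.setdefault(resource, value)
    # Remaining gaps come from the LimitRange.
    for resource, value in default_request.items():
        requests.setdefault(resource, value)
    for resource, value in default.items():
        limits.setdefault(resource, value)
    return {"requests": requests, "limits": limits}


default = {"cpu": "500m", "memory": "512Mi"}
default_request = {"cpu": "100m", "memory": "128Mi"}

print(apply_defaults({}, default, default_request))
print(apply_defaults({"requests": {"cpu": "200m"}}, default, default_request))
print(apply_defaults({"limits": {"memory": "1Gi"}}, default, default_request))
```

The third case is worth noticing: the container ends up with a 1Gi memory request, not the 128Mi defaultRequest.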

Use LimitRange to:

– Prevent BestEffort pods from being admitted (by setting defaultRequest values)

– Enforce organizational standards for minimum resource specifications

– Protect the cluster from pods requesting unbounded resources

ResourceQuota

ResourceQuota limits the total amount of resources that can be consumed by all pods in a namespace. This is the multi-tenant governance tool.

    apiVersion: v1
    kind: ResourceQuota
    metadata:
      name: production-quota
      namespace: production
    spec:
      hard:
        requests.cpu: "20"
        requests.memory: "40Gi"
        limits.cpu: "40"
        limits.memory: "80Gi"
        pods: "100"
        persistentvolumeclaims: "20"
        requests.storage: "500Gi"
        count/deployments.apps: "50"
        count/services: "50"
        count/secrets: "100"
        count/configmaps: "100"

Critical interaction with LimitRange: When a ResourceQuota constrains requests.cpu, requests.memory, limits.cpu, or limits.memory in a namespace, every new pod must specify that value for each of its containers or it will be rejected. This is why LimitRange defaults are important: they fill in the missing values at admission, so pods without explicit resources are not rejected by the quota system.
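A rough model of the quota check makes both rejection paths visible. This is an illustrative sketch, not the API server's logic: quantities are plain integers (millicores for CPU, Mi for memory), and check_quota is a hypothetical helper.

```python
def check_quota(pod: dict, used: dict, hard: dict) -> str:
    """Return "admitted" or the reason the quota would reject the pod.

    pod, used and hard map keys such as "requests.cpu" to integers;
    `pod` holds the totals across the pod's containers.
    """
    for key, cap in hard.items():
        if key not in pod:
            return f"rejected: must specify {key}"
        if used.get(key, 0) + pod[key] > cap:
            return f"rejected: exceeded quota for {key}"
    return "admitted"


hard = {"requests.cpu": 20000, "requests.memory": 40960,
        "limits.cpu": 40000, "limits.memory": 81920}
pod = {"requests.cpu": 500, "requests.memory": 768,
       "limits.cpu": 1000, "limits.memory": 1536}

print(check_quota(pod, used={}, hard=hard))                       # admitted
print(check_quota({"requests.cpu": 500}, used={}, hard=hard))     # rejected: must specify requests.memory
print(check_quota(pod, used={"requests.cpu": 19800}, hard=hard))  # rejected: exceeded quota for requests.cpu
```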

Use ResourceQuota to:

– Enforce team/application resource budgets in shared clusters

– Prevent runaway deployments from consuming all cluster capacity

– Implement chargeback policies (track resource consumption per namespace)

Practical Sizing Methodology

Step 1: Instrument Before You Set Values

Deploy initially with only requests set (no CPU limits, memory limits set conservatively high) and monitor for 2–4 weeks under realistic load.

Useful PromQL queries for sizing:

    # p95 CPU usage (cores) over the last 7 days, from 5m rates sampled every 5m.
    # container_cpu_usage_seconds_total is a counter, not a histogram, so use
    # quantile_over_time on a subquery rather than histogram_quantile.
    quantile_over_time(0.95,
      rate(container_cpu_usage_seconds_total{
        container="api-server",
        namespace="production"
      }[5m])[7d:5m]
    )

    # p99 memory working set over the last 7 days
    quantile_over_time(0.99,
      container_memory_working_set_bytes{
        container="api-server",
        namespace="production"
      }[7d]
    )

    # CPU throttling ratio: throttled periods / total periods (alert if >5%)
    rate(container_cpu_cfs_throttled_periods_total{container="api-server"}[5m])
    /
    rate(container_cpu_cfs_periods_total{container="api-server"}[5m])

Step 2: Set CPU Requests from p95 Observations

Set CPU request = p95 CPU usage under realistic production load. For latency-sensitive services: do not set CPU limits. For batch or background jobs: set CPU limits at 2x–4x the request.

Step 3: Set Memory Requests and Limits

Set memory request = p95 memory working set over at least 7 days. Set memory limit = max(observed peak, 1.5 × request). For Java or Python services that do large in-memory processing, use 2x.

    # Production example: Java microservice
    resources:
      requests:
        cpu: "500m"      # p95 observed: ~420m
        memory: "768Mi"  # p95 observed: ~680Mi
      limits:
        # No CPU limit: latency-sensitive service
        memory: "1.5Gi"  # 2x request, covers GC pressure
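The Step 3 rule can be applied mechanically once samples are exported from your metrics system. This is a minimal sketch, assuming memory working-set samples in Mi and a nearest-rank percentile. The helper names are hypothetical, and Prometheus' quantile_over_time interpolates, so its result may differ slightly.

```python
import math


def percentile(samples: list[int], pct: int) -> int:
    """Nearest-rank percentile of a non-empty list of samples."""
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def size_memory(samples_mib: list[int], headroom: float = 1.5) -> tuple[int, int]:
    """Return (request, limit): request = p95, limit = max(peak, headroom * request)."""
    request = percentile(samples_mib, 95)
    limit = max(max(samples_mib), math.ceil(headroom * request))
    return request, limit


steady = list(range(601, 701))  # 100 samples between 601Mi and 700Mi
print(size_memory(steady))      # (695, 1043)

spiky = [100] * 99 + [400]      # one observed peak far above p95
print(size_memory(spiky))       # (100, 400)
```

In the spiky case the observed peak, not the 1.5x multiplier, sets the limit, which is the point of taking the maximum of the two.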

Step 4: Use VPA Recommendations to Validate

Run VPA in Off mode alongside your manually-set values. After 1–2 weeks, compare VPA recommendations to your current settings.

Step 5: Adjust for Workload Lifecycle Events

Account for: JVM warmup at startup (CPU spike 3–10x steady-state), rolling deployment overlap (namespace quota headroom), and known traffic peaks (size to peak, not average).
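For the rolling deployment overlap, a quick calculation shows how much quota headroom a rollout can need. This sketch assumes the Deployment default of maxSurge 25% (rounded up) and the worst case where no old pod has terminated yet. The function names and the example figures are assumptions.

```python
import math


def peak_pod_count(replicas: int, max_surge_percent: int = 25) -> int:
    """Pods that can exist at once during a rolling update (surge rounds up)."""
    return replicas + math.ceil(replicas * max_surge_percent / 100)


def rollout_fits_quota(replicas: int, request_millicores: int,
                       quota_millicores: int, max_surge_percent: int = 25) -> bool:
    """True if the peak pod count still fits inside the namespace CPU request quota."""
    peak = peak_pod_count(replicas, max_surge_percent)
    return peak * request_millicores <= quota_millicores


print(peak_pod_count(10))                     # 13
print(rollout_fits_quota(40, 500, 20_000))    # False: 50 pods x 500m = 25 cores
print(rollout_fits_quota(30, 500, 20_000))    # True: 38 pods x 500m = 19 cores
```

A deployment that exactly fills its quota at steady state (40 × 500m = 20 cores) cannot roll, because the surge pods are rejected by the quota.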

Decision Framework: What to Set Based on Workload Type

| Workload Type | CPU Request | CPU Limit | Memory Request | Memory Limit | QoS Target |
|---|---|---|---|---|---|
| Latency-sensitive API (Go, Java, Node) | p95 observed | Do not set | p95 observed | 1.5–2x request | Burstable |
| Batch / background jobs | p50 observed | 2–4x request | p95 observed | 1.5x request | Burstable |
| System-critical (coredns, metrics-server) | Conservative | Equal to request | Conservative | Equal to request | Guaranteed |
| Stateful / databases (in-cluster) | p95 observed | Do not set | p99 observed | 1.25x request | Burstable |
| Dev/test workloads | Low (100m) | 2x request | Low (128Mi) | 2x request | Burstable |
| Sidecar containers (envoy, otel-collector) | Profile individually | Contextual | Profile individually | 1.5x request | Matches primary |

Monitoring and Alerting

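    # OOMKill detected: a container restarted and its last termination reason is OOMKilled.
    # kube_pod_container_status_last_terminated_reason is a gauge, so it is paired
    # with the restart counter instead of being wrapped in increase().
    - alert: ContainerOOMKilled
      expr: |
        increase(kube_pod_container_status_restarts_total[5m]) > 0
        and on (namespace, pod, container)
        kube_pod_container_status_last_terminated_reason{reason="OOMKilled"} == 1
      for: 0m
      labels:
        severity: warning

    # CPU throttling: more than 10% of CFS periods throttled
    - alert: CPUThrottlingHigh
      expr: |
        rate(container_cpu_cfs_throttled_periods_total[5m])
        /
        rate(container_cpu_cfs_periods_total[5m])
        > 0.10
      for: 5m
      labels:
        severity: warning

    # Memory near limit >85%
    - alert: MemoryNearLimit
      expr: |
        container_memory_working_set_bytes
        /
        (container_spec_memory_limit_bytes > 0)
        > 0.85
      for: 5m
      labels:
        severity: warning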

FAQ

Q: My Java application keeps getting OOMKilled but I’ve set limits at 2x average usage. What am I missing?

The JVM heap (-Xmx) is not the only memory consumer. Off-heap buffers, Metaspace, thread stacks, and JVM overhead add 25–40% on top. Set -Xmx at ~75% of your container memory limit. For a 1Gi limit: -Xmx768m is a safe starting point.
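The 75% rule of thumb is simple enough to script when generating JVM flags per environment. This is a trivial sketch; suggested_xmx_mib is a hypothetical helper, and the fraction should be tuned to how much off-heap memory the service actually uses.

```python
def suggested_xmx_mib(container_limit_mib: int, heap_fraction: float = 0.75) -> int:
    """Heap size in Mi that leaves the rest of the limit for non-heap JVM memory."""
    return int(container_limit_mib * heap_fraction)


print(f"-Xmx{suggested_xmx_mib(1024)}m")  # -Xmx768m
print(f"-Xmx{suggested_xmx_mib(1536)}m")  # -Xmx1152m
```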

Q: Should I set the same resources in all environments?

No. Dev/test can use lower values. But the ratio between request and limit should be similar, and the resource profile should be close enough to catch misconfigurations before production.

Q: Can I use HPA and VPA together?

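Yes, carefully. Use HPA for replica scaling (CPU or custom metrics) and VPA in Off mode for right-sizing guidance. If VPA must apply changes, restrict it to resources HPA does not scale on, for example controlledResources: ["memory"] when HPA scales on CPU. Never have both managing the same metric simultaneously.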

Q: My cluster uses cgroups v2. Does CPU throttling still apply?

Yes. cgroups v2 changes the interface, not the mechanism: CPU requests become cpu.weight and CPU limits are written to cpu.max, but limits are still enforced by CFS bandwidth control as a quota per period. For latency-sensitive workloads, the case for not setting CPU limits remains valid on cgroups v2.

Q: What is a realistic cluster overcommit ratio?

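CPU: 5–10x overcommit (total limits vs allocatable cores) is common for mixed workloads with accurate requests. Total requests cannot exceed allocatable capacity, because the scheduler enforces that, so overcommit is measured against limits. Memory: 1.5–2x cluster-level overcommit of limits is manageable, which is what 1.5x–2x limit:request ratios produce on a fully requested node. Beyond 2x, node memory pressure events become frequent.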

Q: LimitRange is set but pods are still admitted without resources. Why?

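LimitRange is enforced only at admission, so pods created before the LimitRange existed keep their original spec until they are recreated. Defaults are also assigned only by type: Container entries; a LimitRange that contains only type: Pod entries assigns nothing. Note that defaults are applied per resource: a container that specifies requests.cpu but not limits.cpu does receive the default CPU limit. Also verify the LimitRange is in the correct namespace: kubectl get limitrange -n <namespace>.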

Q: What does a pod with no memory limit do to a node?

It can consume all available node memory unconstrained. This triggers the Linux OOM killer at the node level, which may kill processes outside the container — including the kubelet itself in extreme cases. Memory limits are non-negotiable in production.

Tested against Kubernetes 1.28–1.32. cgroups v2 behavior noted where it differs from v1. VPA examples use autoscaling.k8s.io/v1 API (VPA 0.14+).