Your pods are pending. Your on-call engineer is getting paged. Somewhere in the chain between “I need more compute” and “compute is available,” something is too slow. That something is almost always node provisioning, and the tool you chose to manage it determines whether that delay is closer to five minutes or to ninety seconds.

Node autoscaling is one of those infrastructure decisions that looks simple until you’re running it in production. Unschedulable pods sitting in Pending don’t just mean a delayed deployment: they mean latency spikes, dropped traffic, breached SLOs, and engineers debugging things that should have been invisible. At scale, they also mean either burning money on over-provisioned nodes or gambling on under-provisioning at the worst possible moment.

Cluster Autoscaler (CA) has been the default answer for years. Karpenter emerged from AWS in 2021, reached v1.0 (GA) in 2024, and by 2025 had become the default recommendation for most AWS-native clusters. In 2026, both tools are mature, widely deployed, and genuinely good, but they solve the problem differently, and picking the wrong one for your environment has real consequences.

This article is a deep technical comparison. It assumes you already know what Kubernetes is and have opinions about infrastructure. The goal is to give you a clear picture of how each tool works, where each one wins, and a decision framework you can actually use.

Why Node Autoscaling Is Hard

The fundamental tension in autoscaling is this: you want compute available before you need it, but you don’t want to pay for compute you’re not using. These goals are in direct conflict, and every autoscaling system is an attempt to find the least-bad trade-off.

Without autoscaling, you’re doing one of two things:

- Over-provisioning — you run enough nodes to handle peak load at all times. Your average utilization sits at 20–30%, and you’re paying for the other 70–80% to sit idle.

- Under-provisioning — you run lean, and when traffic spikes, pods go Pending. Your SLOs breach. You get paged at 3am to manually scale.

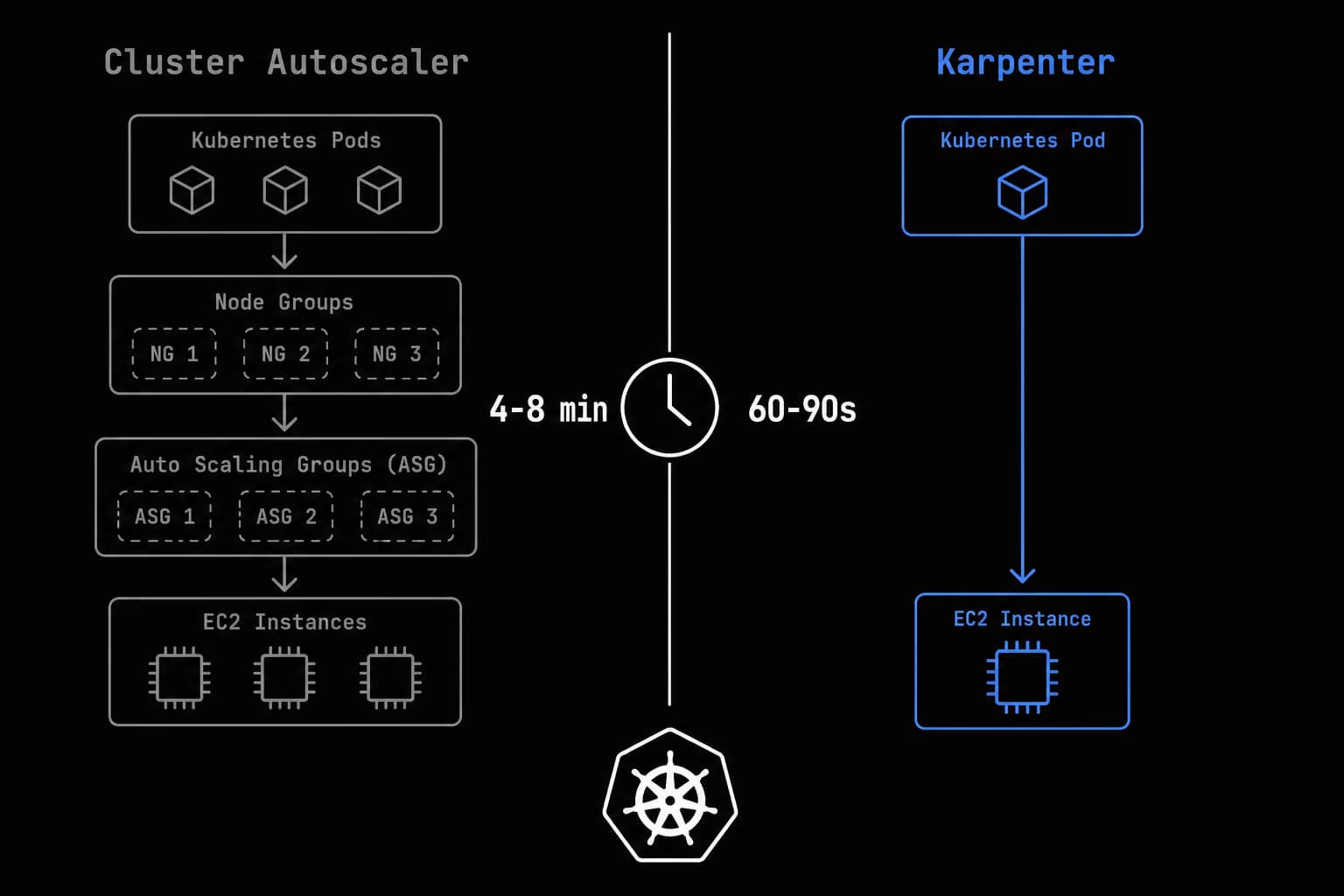

A common failure mode with poorly tuned autoscaling is the “thundering herd at scale-up” pattern: HPA creates new pods faster than node autoscaling can provision capacity. The provisioning window matters. With CA and typical ASG-backed node groups on AWS, you’re looking at roughly 3–6 minutes. With Karpenter, roughly 90 seconds to 3 minutes. At 100 RPS, a 3-minute window is 100 × 180 = 18,000 requests served under degraded conditions.
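The arithmetic behind that figure fits in a scratch script. This is a minimal sketch that assumes a constant request rate and treats every request arriving during the provisioning window as degraded, which is a simplification; the function name is illustrative.

```python
def degraded_requests(rps: float, window_seconds: float) -> int:
    """Requests that arrive while pods are still waiting for capacity.

    Assumes a constant request rate and that every request in the window
    is affected, which overstates the impact if existing pods absorb
    part of the load.
    """
    return round(rps * window_seconds)


if __name__ == "__main__":
    # 100 RPS over a 3-minute provisioning window, as in the text.
    print(degraded_requests(100, 3 * 60))  # 18000
    # The same load over a 90-second window.
    print(degraded_requests(100, 90))      # 9000
```

Plugging in your own peak RPS and observed provisioning time gives a rough upper bound on the blast radius of a slow scale-up.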

Cluster Autoscaler: How It Actually Works

Cluster Autoscaler is a Kubernetes-native project under the kubernetes/autoscaler repository, in production since 2016, supporting AWS, GCP, Azure, Alibaba, DigitalOcean, and more.

The Node Group Model

CA operates on node groups — ASGs on AWS, MIGs on GCP, VMSSs on Azure. CA’s job is to decide when to increase or decrease the desired capacity of these groups. CA does not provision individual nodes. It scales node groups, and the node group provisions nodes. This indirection adds latency and reduces flexibility.

Scale-Up: Detecting Unschedulable Pods

CA runs a control loop (default scan interval: 10 seconds). For each Pending pod that the scheduler has marked unschedulable (PodScheduled=False), CA simulates adding a node from each known node group and checks whether the pod would become schedulable. When more than one node group qualifies, CA applies an expander to choose which group to scale:

- least-waste: minimizes CPU/memory waste after scheduling (best default for cost)

- most-pods: maximizes pods scheduled per scale-up operation

- priority: lets you define ordering via ConfigMap

- grpc: delegates to an external gRPC service
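To make the least-waste idea concrete, here is a simplified sketch rather than CA's actual implementation. It assumes one pending pod, scores each candidate group by the fraction of CPU plus the fraction of memory left unused on a new node, and picks the lowest score. The group names and capacities are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class NodeGroup:
    name: str
    cpu_m: int        # allocatable CPU of one node, in millicores
    memory_mib: int   # allocatable memory of one node, in MiB


def fits(group, cpu_m, memory_mib):
    """True if a single new node from this group could hold the pod."""
    return cpu_m <= group.cpu_m and memory_mib <= group.memory_mib


def waste_score(group, cpu_m, memory_mib):
    """Unused CPU fraction plus unused memory fraction (lower is better)."""
    cpu_waste = (group.cpu_m - cpu_m) / group.cpu_m
    mem_waste = (group.memory_mib - memory_mib) / group.memory_mib
    return cpu_waste + mem_waste


def pick_least_waste(groups, cpu_m, memory_mib):
    """Return the fitting group with the lowest waste score, or None."""
    candidates = [g for g in groups if fits(g, cpu_m, memory_mib)]
    if not candidates:
        return None
    return min(candidates, key=lambda g: waste_score(g, cpu_m, memory_mib))


# Hypothetical node groups, not real instance specifications.
GROUPS = [
    NodeGroup("general-small", 2000, 8192),
    NodeGroup("compute-small", 2000, 4096),
    NodeGroup("general-large", 8000, 32768),
]

if __name__ == "__main__":
    print(pick_least_waste(GROUPS, 1500, 2048).name)  # compute-small
    print(pick_least_waste(GROUPS, 3000, 2048).name)  # general-large
```

The key limitation the sketch makes visible: the choice is only ever among groups that already exist, so a pod that fits none of them stays Pending.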

    # Cluster Autoscaler deployment (AWS, production-tuned)
    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: cluster-autoscaler
      namespace: kube-system
    spec:
      replicas: 1
      selector:
        matchLabels:
          app: cluster-autoscaler
      template:
        metadata:
          labels:
            app: cluster-autoscaler
          annotations:
            cluster-autoscaler.kubernetes.io/safe-to-evict: "false"
        spec:
          priorityClassName: system-cluster-critical
          serviceAccountName: cluster-autoscaler
          containers:
            - image: registry.k8s.io/autoscaling/cluster-autoscaler:v1.30.3
              name: cluster-autoscaler
              resources:
                requests:
                  cpu: 100m
                  memory: 600Mi
                limits:
                  cpu: 200m
                  memory: 1Gi
              command:
                - ./cluster-autoscaler
                - --cloud-provider=aws
                - --node-group-auto-discovery=asg:tag=k8s.io/cluster-autoscaler/enabled,k8s.io/cluster-autoscaler/my-cluster
                - --expander=least-waste
                - --balance-similar-node-groups=true
                - --scale-down-delay-after-add=10m
                - --scale-down-unneeded-time=10m
                - --scale-down-utilization-threshold=0.5
                - --max-graceful-termination-sec=600
                - --scan-interval=10s

Scale-Down: The Conservative Approach

A node is a scale-down candidate only if:

– CPU and memory utilization (by requests) is below threshold (default: 50%)

– All pods could be rescheduled elsewhere

– No pod has cluster-autoscaler.kubernetes.io/safe-to-evict: "false"

– The node has been underutilized for at least --scale-down-unneeded-time (default: 10m)

This conservatism prevents churn — a feature, not a bug.
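The four conditions above can be read as a single predicate. The sketch below is a simplified model, not CA's code: it takes the result of the rescheduling simulation as a boolean input (pods_fit_elsewhere) instead of modelling the scheduler, and the class and field names are illustrative.

```python
from dataclasses import dataclass, field

SAFE_TO_EVICT = "cluster-autoscaler.kubernetes.io/safe-to-evict"


@dataclass
class Pod:
    cpu_m: int
    memory_mib: int
    annotations: dict = field(default_factory=dict)


@dataclass
class Node:
    allocatable_cpu_m: int
    allocatable_memory_mib: int
    pods: list
    underutilized_seconds: int  # how long the node has been below threshold


def utilization(node):
    """Request-based utilization: the higher of the CPU and memory ratios."""
    cpu = sum(p.cpu_m for p in node.pods) / node.allocatable_cpu_m
    mem = sum(p.memory_mib for p in node.pods) / node.allocatable_memory_mib
    return max(cpu, mem)


def is_scale_down_candidate(node, pods_fit_elsewhere,
                            threshold=0.5, unneeded_seconds=600):
    """Apply the four checks in order; any failure keeps the node."""
    if utilization(node) >= threshold:
        return False
    if not pods_fit_elsewhere:
        return False
    if any(p.annotations.get(SAFE_TO_EVICT) == "false" for p in node.pods):
        return False
    return node.underutilized_seconds >= unneeded_seconds


if __name__ == "__main__":
    node = Node(4000, 16384, [Pod(500, 1024), Pod(500, 2048)], 700)
    print(is_scale_down_candidate(node, pods_fit_elsewhere=True))  # True
```

The defaults mirror the 50% threshold and 10-minute unneeded time quoted above; a single pinned pod is enough to keep an otherwise idle node alive.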

Karpenter: How It Actually Works

Karpenter is an open-source project originally built by AWS. Its core was donated to the Kubernetes project (SIG Autoscaling, under the CNCF) in 2023, and it reached GA (v1.0) in mid-2024. Providers exist for AWS (stable), Azure (stable), and GCP (beta).

The Core Insight: Bypass the Node Group

Karpenter calls the EC2 Fleet API (CreateFleet) directly, with no ASG involvement. This means:

– Any instance type in a single request, without pre-configuring a node group

– No intermediary: Karpenter → EC2 API → node joins cluster

– Right-size nodes to exactly what workloads need, across the full instance catalog

– Karpenter handles full node lifecycle, including termination

NodePool and EC2NodeClass

    # EC2NodeClass: cloud-specific parameters
    apiVersion: karpenter.k8s.aws/v1
    kind: EC2NodeClass
    metadata:
      name: default
    spec:
      amiSelectorTerms:
        - alias: al2023@latest
      role: "KarpenterNodeRole-my-cluster"
      subnetSelectorTerms:
        - tags:
            karpenter.sh/discovery: "my-cluster"
      securityGroupSelectorTerms:
        - tags:
            karpenter.sh/discovery: "my-cluster"
      blockDeviceMappings:
        - deviceName: /dev/xvda
          ebs:
            volumeSize: 50Gi
            volumeType: gp3
            encrypted: true
      metadataOptions:
        httpTokens: required
        httpPutResponseHopLimit: 1
    ---
    # NodePool: intent and constraints
    apiVersion: karpenter.sh/v1
    kind: NodePool
    metadata:
      name: general-purpose
    spec:
      template:
        spec:
          nodeClassRef:
            group: karpenter.k8s.aws
            kind: EC2NodeClass
            name: default
          requirements:
            - key: karpenter.sh/capacity-type
              operator: In
              values: ["on-demand", "spot"]
            - key: kubernetes.io/arch
              operator: In
              values: ["amd64", "arm64"]
            - key: karpenter.k8s.aws/instance-category
              operator: In
              values: ["c", "m", "r"]
            - key: karpenter.k8s.aws/instance-generation
              operator: Gt
              values: ["5"]
            - key: karpenter.k8s.aws/instance-size
              operator: NotIn
              values: ["nano", "micro", "small", "medium", "large"]
          expireAfter: 720h
      limits:
        cpu: "1000"
        memory: 1000Gi
      disruption:
        consolidationPolicy: WhenEmptyOrUnderutilized
        consolidateAfter: 5m
        budgets:
          - nodes: "5%"
            schedule: "0 8 * * mon-fri"
            duration: 10h
          - nodes: "25%"

Just-in-Time Provisioning and Bin Packing

When pods go Pending, Karpenter reacts to the watch event rather than waiting for a polling interval, and immediately:

1. Collects all Pending pods

2. Simulates bin packing — fewest possible nodes across the full instance catalog

3. Selects instances that satisfy all pod requirements

4. Calls EC2 API to provision the optimal instance(s)
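The steps above can be sketched as a small packing exercise. This is not Karpenter's scheduler: it assumes a single instance type per plan (Karpenter can mix types), uses first-fit decreasing as the packing heuristic, and works from a hypothetical catalog whose names, sizes, and prices are made up for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InstanceType:
    name: str
    cpu_m: int
    memory_mib: int
    hourly_cost: float  # illustrative number, not real pricing


def pack(pods, instance):
    """First-fit decreasing. Returns the number of nodes needed,
    or None if some pod cannot fit on this instance type at all."""
    bins = []  # each bin is [free_cpu_m, free_memory_mib]
    for cpu, mem in sorted(pods, reverse=True):
        if cpu > instance.cpu_m or mem > instance.memory_mib:
            return None
        for b in bins:
            if cpu <= b[0] and mem <= b[1]:
                b[0] -= cpu
                b[1] -= mem
                break
        else:
            # No existing node has room, so open a new one.
            bins.append([instance.cpu_m - cpu, instance.memory_mib - mem])
    return len(bins)


def cheapest_plan(pods, catalog):
    """Try every instance type and keep the lowest total hourly cost.
    Returns (instance_type, node_count, total_cost) or None."""
    best = None
    for inst in catalog:
        count = pack(pods, inst)
        if count is None:
            continue
        cost = count * inst.hourly_cost
        if best is None or cost < best[2]:
            best = (inst, count, cost)
    return best


# Hypothetical catalog and pending pods as (cpu_m, memory_mib) requests.
CATALOG = [
    InstanceType("type-4cpu", 4000, 8192, 0.20),
    InstanceType("type-8cpu", 8000, 16384, 0.38),
    InstanceType("type-16cpu", 16000, 32768, 0.80),
]
PENDING = [(2000, 2048)] * 6 + [(1000, 1024)] * 2

if __name__ == "__main__":
    inst, count, _ = cheapest_plan(PENDING, CATALOG)
    print(inst.name, count)  # type-8cpu 2
```

With these made-up prices, two mid-sized nodes beat both four small nodes and one large node, which is the kind of choice a fixed node group cannot make at runtime.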

Disruption and Consolidation

Karpenter’s differentiated value: active cluster consolidation. It evaluates whether nodes can be removed by redistributing pods onto others, or replaced with a smaller instance type. A c5.4xlarge (16 vCPU) whose pods request only about 3 vCPU can be replaced with a c5.xlarge (4 vCPU, slightly less of it allocatable). Teams commonly report 30–50% compute cost reduction, though the result depends heavily on workload shape.

consolidationPolicy options:

– WhenEmpty — only remove nodes with no workload pods (safest)

– WhenEmptyOrUnderutilized — also replace underutilized nodes with smaller ones
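The delete-or-replace decision described above can be modelled in a few lines. This sketch is an assumption-laden simplification: it considers CPU requests only, ignores disruption budgets and PDBs, and uses a hypothetical catalog of (name, allocatable millicores, hourly cost) tuples with made-up numbers.

```python
def consolidation_action(pod_cpus_m, other_nodes_free_cpu_m,
                         current_hourly_cost, catalog):
    """Return ("delete", None), ("replace", type_name) or ("keep", None).

    delete:  every pod fits into spare capacity on the other nodes.
    replace: a strictly cheaper instance type can hold all the pods.
    keep:    neither option is available.
    """
    # Step 1: try to place each pod (largest first) on another node.
    free = list(other_nodes_free_cpu_m)
    placed_all = True
    for cpu in sorted(pod_cpus_m, reverse=True):
        for i, spare in enumerate(free):
            if cpu <= spare:
                free[i] -= cpu
                break
        else:
            placed_all = False
            break
    if placed_all:
        return ("delete", None)

    # Step 2: look for a cheaper type that fits the node's total requests.
    total = sum(pod_cpus_m)
    cheaper = [(cost, name) for name, cpu_m, cost in catalog
               if cpu_m >= total and cost < current_hourly_cost]
    if cheaper:
        return ("replace", min(cheaper)[1])
    return ("keep", None)


# Hypothetical catalog; allocatable CPU sits slightly below the nominal size.
CATALOG = [
    ("type-4cpu", 3900, 0.17),
    ("type-8cpu", 7900, 0.34),
    ("type-16cpu", 15900, 0.68),
]

if __name__ == "__main__":
    # Three 1-vCPU pods on the largest type, little room elsewhere.
    print(consolidation_action([1000, 1000, 1000], [500, 500], 0.68, CATALOG))
    # ('replace', 'type-4cpu')
```

Note that a full 4000m of requests does not fit the 3900m allocatable on the smallest hypothetical type, which is why right-sizing works from allocatable capacity rather than the advertised vCPU count.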

Architecture Comparison

| Dimension | Cluster Autoscaler | Karpenter |
|---|---|---|
| Node provisioning model | Scales node groups (ASG/MIG/VMSS) | Direct cloud API, no node groups |
| Instance flexibility | Pre-defined node group types | Full instance catalog at runtime |
| Scale-up trigger | Polling (10s scan interval) | Watch-based event (near-instant) |
| Scale-down | Removes underutilized nodes | Removes + consolidates + right-sizes |
| Spot handling | Via ASG + AWS Node Termination Handler | Native, first-class, no NTH needed |
| Configuration model | Deployment flags | Declarative CRDs |
| Cloud support | All major + on-prem | AWS (GA), Azure (stable), GCP (beta) |
| Consolidation | No | Yes |
| Community maturity | Very mature (since 2016) | Mature (GA 2024) |

Scaling Speed: The Numbers

Cluster Autoscaler on AWS (typical):

1. Pod Pending → CA scan detects (0–10s)

2. ASG SetDesiredCapacity API call (~15–30s)

3. EC2 instance starts (1–2 min)

4. Node bootstrap + kubelet registration (30–60s)

5. Pod scheduled (5–10s)

Total: 3–6 minutes (up to 12 min during high-demand periods)

Karpenter on AWS (typical):

1. Pod Pending → watch event fires (~1s)

2. EC2 CreateFleet API call (~2–3s)

3. EC2 instance starts (same hardware — 1–2 min)

4. Node bootstraps + Ready (30–60s)

5. Pod scheduled (~5s)

Total: 90 seconds to 3 minutes
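Summing the itemized stage ranges is a quick sanity check on both timelines. The sketch below uses only the per-stage figures listed above; the stage labels are shortened and the helper name is illustrative. For Karpenter the sum lands close to the quoted total. For CA the itemized stages add up to less than the quoted 3–6 minutes, so that total evidently includes delays the list does not break out.

```python
# (min_seconds, max_seconds) for each stage, taken from the lists above.
CA_STAGES = {
    "scan detects pending pod": (0, 10),
    "ASG API call": (15, 30),
    "EC2 instance start": (60, 120),
    "bootstrap + kubelet registration": (30, 60),
    "pod scheduled": (5, 10),
}
KARPENTER_STAGES = {
    "watch event fires": (1, 1),
    "EC2 API call": (2, 3),
    "EC2 instance start": (60, 120),
    "bootstrap + Ready": (30, 60),
    "pod scheduled": (5, 5),
}


def total_range(stages):
    """Best-case and worst-case totals, assuming stages run back to back."""
    low = sum(lo for lo, _ in stages.values())
    high = sum(hi for _, hi in stages.values())
    return low, high


if __name__ == "__main__":
    print(total_range(CA_STAGES))         # (110, 230)
    print(total_range(KARPENTER_STAGES))  # (98, 189)
```

In both paths the EC2 instance start and node bootstrap dominate, so image size and bootstrap scripts are worth measuring whichever autoscaler you run.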

Cost Optimization: Where Karpenter Pulls Ahead

Right-sizing: CA requires pre-defined node groups. Karpenter selects the minimum viable instance for the pending workload from the full catalog.

Consolidation vs scale-down: CA removes underutilized nodes. Karpenter replaces a large underutilized node with a smaller one that still fits all pods. This produces compounding savings over time.

Spot handling: When interruption handling is configured (an SQS queue fed by EventBridge), Karpenter receives EC2 interruption notices, provisions a replacement, and drains the node, all within the 2-minute warning window. No AWS Node Termination Handler required. It also diversifies spot requests across instance types automatically to reduce simultaneous interruption risk.

Multi-Cloud Support in 2026

| Cloud | Cluster Autoscaler | Karpenter |
|---|---|---|
| AWS | ✅ Production-stable | ✅ Production-stable (reference impl.) |
| GCP | ✅ Production-stable | ⚠️ Beta (karpenter-provider-gcp) |
| Azure | ✅ Production-stable | ✅ Stable (karpenter-provider-azure) |
| Alibaba | ✅ Supported | ❌ No provider |
| DigitalOcean | ✅ Supported | ❌ No provider |
| On-premises / Cluster API | ✅ Supported | ❌ Not supported |

When Cluster Autoscaler Is Still the Right Choice

- Non-AWS environments — GCP, Alibaba, DigitalOcean, on-prem with Cluster API

- Existing node group architecture — significant investment in ASG design, compliance tooling

- Regulatory constraints — some frameworks require ASG-backed provisioning audit trails

- Cluster API / bare metal — CA is the only mature option

- Team familiarity and a CA deployment that already works well: migration cost may not justify the benefit

When Karpenter Is the Right Choice

- AWS-native, cost optimization priority — right-sizing + consolidation = meaningful cost reduction

- Diverse and variable workloads — batch, spot, GPU, stateless APIs — Karpenter handles all with a few NodePools

- Spot-heavy clusters — native interruption handling, diversification, no NTH

- Declarative infrastructure-as-code culture — NodePools version cleanly in Git

- Low-latency scaling requirements — event-driven workloads, KEDA-triggered jobs, sharp traffic spikes

Running Both: Migration Path and Gotchas

Separating Responsibility

Use labels and taints to prevent CA and Karpenter from managing the same nodes:

    # NodePool with taint (CA-managed pods won't tolerate this)
    spec:
      template:
        metadata:
          labels:
            provisioner: karpenter
        spec:
          taints:
            - key: karpenter.sh/provisioned
              value: "true"
              effect: NoSchedule
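Why the taint keeps workloads apart comes down to toleration matching. The sketch below models the core Kubernetes rule in simplified form: it ignores the empty-key wildcard and tolerationSeconds, and treats only NoSchedule and NoExecute taints as blocking. The class names are illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Taint:
    key: str
    value: str
    effect: str


@dataclass(frozen=True)
class Toleration:
    key: str
    operator: str = "Equal"  # "Equal" or "Exists"
    value: str = ""
    effect: str = ""         # empty matches any effect


def tolerates(toleration, taint):
    """True if this toleration matches the taint."""
    if toleration.effect and toleration.effect != taint.effect:
        return False
    if toleration.key != taint.key:
        return False
    if toleration.operator == "Exists":
        return True
    return toleration.value == taint.value


def can_schedule(tolerations, taints):
    """A pod can land on a node only if every blocking taint is tolerated."""
    blocking = [t for t in taints if t.effect in ("NoSchedule", "NoExecute")]
    return all(any(tolerates(tol, taint) for tol in tolerations)
               for taint in blocking)


KARPENTER_TAINT = Taint("karpenter.sh/provisioned", "true", "NoSchedule")

if __name__ == "__main__":
    # A pod with no tolerations stays off Karpenter nodes.
    print(can_schedule([], [KARPENTER_TAINT]))  # False
    # A pod that opts in with a matching toleration is allowed.
    opt_in = Toleration("karpenter.sh/provisioned", "Equal", "true", "NoSchedule")
    print(can_schedule([opt_in], [KARPENTER_TAINT]))  # True
```

During migration, adding the toleration to a workload is the explicit opt-in step that moves it onto Karpenter-managed capacity.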

Gradual Migration

- Phase 1 — Karpenter manages spot/batch workloads. CA manages on-demand production nodes.

- Phase 2 — Migrate spot workloads fully. Remove AWS NTH.

- Phase 3 — Migrate on-demand. Reduce CA node group capacity gradually.

- Phase 4 — Decommission CA once all groups are empty.

Key Gotchas

- Karpenter consolidation + permissive PDBs: a PDB with maxUnavailable: 100% allows disruptive consolidation. Audit PDBs before enabling WhenEmptyOrUnderutilized.

- NodePool limits are hard stops: pods go Pending indefinitely once the limit is reached. Monitor utilization.

- AMI drift: the @latest alias resolves to each new AMI as it is released, and Karpenter’s drift detection then replaces existing nodes to match. Consider pinning for strict change control.

- Simultaneous scale-down conflicts: use strict label/taint segregation during migration.
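A PDB audit can start as a small script. The sketch below is a simplified model: it assumes every replica is healthy, rounds percentages up as Kubernetes does for PDBs, and flags a budget as permissive when it allows all replicas to be evicted at once. The function names are illustrative, and the spec dictionaries stand in for the spec section of real PodDisruptionBudget objects.

```python
def _resolve(value, replicas):
    """Turn an int or a percentage string into a pod count (rounded up)."""
    if isinstance(value, str) and value.endswith("%"):
        pct = int(value[:-1])
        return -(-replicas * pct // 100)  # ceiling division
    return int(value)


def allowed_disruptions(spec, replicas):
    """How many pods may be evicted at once, assuming all are healthy."""
    if "maxUnavailable" in spec:
        return min(replicas, _resolve(spec["maxUnavailable"], replicas))
    if "minAvailable" in spec:
        return max(0, replicas - _resolve(spec["minAvailable"], replicas))
    return replicas


def is_permissive(spec, replicas):
    """True if the budget would let every replica be evicted together."""
    return replicas > 0 and allowed_disruptions(spec, replicas) >= replicas


if __name__ == "__main__":
    print(is_permissive({"maxUnavailable": "100%"}, 4))  # True
    print(is_permissive({"maxUnavailable": 1}, 4))       # False
    print(allowed_disruptions({"minAvailable": "50%"}, 7))  # 3
```

Anything flagged here deserves a closer look before consolidation is switched on, since the budget is the only thing standing between Karpenter and a full eviction of that workload.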

Decision Framework

| Factor | Cluster Autoscaler | Karpenter |
|---|---|---|
| Cloud support | All clouds + on-prem | AWS (GA), Azure (stable), GCP (beta) |
| Provisioning speed | 3–6 minutes | 90 seconds to 3 minutes |
| Instance flexibility | Node group pre-config required | Full catalog, runtime selection |
| Cost optimization | Scale-down only | Scale-down + consolidation + right-sizing |
| Spot integration | Via ASG + NTH | Native, first-class |
| Operational complexity | Lower | Moderate |
| Cluster API / bare metal | Yes | No |
| Consolidation | No | Yes |

    Running on AWS?
    ├── No  → Azure? → Karpenter (stable) or CA
    │         GCP?   → CA or GKE NAP (preferred)
    │         Other  → Cluster Autoscaler
    │
    └── Yes → Hard regulatory constraints on non-ASG provisioning?
              ├── Yes → Cluster Autoscaler
              └── No  → Cost optimization priority or diverse workloads?
                        ├── Yes → Karpenter
                        └── No  → Either (flip for team preference)
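The same tree can be written as a function, which is handy if you want to encode the decision in a platform checklist or an internal tool. This is just a transcription of the branches above; the function and parameter names are illustrative.

```python
def recommend(cloud, asg_provisioning_required=False,
              cost_or_diverse_workloads=False):
    """Walk the decision tree and return the recommendation as text."""
    cloud = cloud.strip().lower()
    if cloud == "azure":
        return "Karpenter (stable) or Cluster Autoscaler"
    if cloud == "gcp":
        return "Cluster Autoscaler or GKE NAP (preferred)"
    if cloud != "aws":
        return "Cluster Autoscaler"
    # From here on we are on AWS.
    if asg_provisioning_required:
        return "Cluster Autoscaler"
    if cost_or_diverse_workloads:
        return "Karpenter"
    return "Either"


if __name__ == "__main__":
    print(recommend("aws", cost_or_diverse_workloads=True))  # Karpenter
    print(recommend("on-prem"))                              # Cluster Autoscaler
```

The ordering matters: the regulatory check comes before the cost check, so a hard ASG requirement wins even when cost optimization is a priority.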

FAQ

Is Karpenter a drop-in replacement for Cluster Autoscaler?

No. Different configuration model, different concepts. Migration requires re-expressing node group config as NodePools/NodeClasses, auditing PDBs, and running both in parallel. Budget at least a sprint for a medium-sized cluster.

Can I run Karpenter on self-managed Kubernetes (not EKS)?

Yes, but non-trivial. Karpenter requires IAM credentials (IRSA or equivalent) to call EC2 APIs. On self-managed clusters, this requires more setup than on EKS where IRSA is built-in.

How does Karpenter interact with HPA and VPA?

No conflict. HPA creates pods → pods go Pending if insufficient nodes → Karpenter provisions nodes → pods scheduled. VPA adjusts pod resource requests, which Karpenter uses as inputs for bin packing.

What happens when Karpenter itself goes down?

Existing nodes and pods continue normally. New pods requiring provisioning go Pending until Karpenter recovers. Scale-down and consolidation pause. Deploy multiple replicas with leader election for production.

Does Karpenter support GPU nodes?

Yes. GPU instance families (for example p3, p4d, g4dn, g5) can be included in NodePool requirements. Create dedicated NodePools with appropriate taints for GPU-requesting pods.

How does Karpenter handle AMI updates?

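Mainly through drift. When the AMI that an EC2NodeClass resolves to changes, Karpenter marks existing nodes as drifted and replaces them within the NodePool’s disruption budgets, which gives you a rolling AMI update without additional tooling. The expireAfter field adds an upper bound on node age: in the v1 API, expired nodes begin draining as soon as they expire, and replacements are provisioned as their pods are rescheduled.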

Is Cluster Autoscaler still actively maintained?

Yes. CA remains under active development in kubernetes/autoscaler, with releases tracking Kubernetes minor versions. It is not being deprecated. For non-AWS environments and working CA deployments, it remains a fully supported and rational choice.

Tested against Kubernetes 1.28–1.32. Karpenter v1.x API (GA). CA v1.30.x. AWS provider examples; Azure and GCP provider details may differ.