Deployment Models for a Scalable Log Aggregation Architecture using Loki

Deploying a scalable Loki is not a straightforward task. We have already talked about Loki in previous posts on this site, and it is becoming more and more popular, with usage growing every day. That is why I think it makes sense to write another post, this time about Loki's architecture.

Loki has several advantages that make it a default choice for deploying a log aggregation stack. One of them is its scalability: its different deployment models let you decide how many components to deploy and what each of them is responsible for. So the goal of this post is to show you how to deploy a scalable Loki solution, which rests on two concepts: the components available and how you group them.

Let's start with the different components:

- ingester: responsible for writing log data to long-term storage backends (DynamoDB, S3, Cassandra, etc.) on the write path and returning log data for in-memory queries on the read path.

- distributor: responsible for handling incoming streams from clients. It’s the first step in the write path for log data.

- query-frontend: optional service that provides the querier’s API endpoints and can be used to accelerate the read path.

- querier: service that handles queries using the LogQL query language, fetching logs from the ingesters and long-term storage.

- ruler: responsible for continually evaluating a set of configurable queries and performing an action based on the result.
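To make the split between the write path and the read path more concrete, here is a minimal toy model in Python. It is not Loki code and uses no Loki API: every class is hypothetical, long-term storage is simulated with an in-memory dict, the distributor picks an ingester with a plain CRC32 hash (real Loki uses a hash ring with replication), the query-frontend only splits a multi-stream request into per-stream sub-queries, and the ruler is left out.

```python
import zlib
from dataclasses import dataclass, field

# Hypothetical toy model of Loki's write and read paths (not Loki's API).
# Long-term storage (S3, DynamoDB, ...) is simulated by a plain dict.
Store = dict[str, list[str]]


@dataclass
class Ingester:
    store: Store
    memory: dict[str, list[str]] = field(default_factory=dict)

    def push(self, stream: str, line: str) -> None:
        # Write path: buffer recent lines in memory.
        self.memory.setdefault(stream, []).append(line)

    def flush(self) -> None:
        # Write path: persist buffered lines to long-term storage.
        for stream, lines in self.memory.items():
            self.store.setdefault(stream, []).extend(lines)
        self.memory.clear()

    def recent(self, stream: str) -> list[str]:
        # Read path: serve lines that have not been flushed yet.
        return list(self.memory.get(stream, []))


@dataclass
class Distributor:
    ingesters: list[Ingester]

    def push(self, stream: str, line: str) -> None:
        # First step of the write path: route each stream to one ingester.
        # Simplification: a CRC32 modulo instead of a hash ring with replicas.
        idx = zlib.crc32(stream.encode()) % len(self.ingesters)
        self.ingesters[idx].push(stream, line)


@dataclass
class Querier:
    ingesters: list[Ingester]
    store: Store

    def query(self, stream: str) -> list[str]:
        # Merge flushed lines from storage with unflushed lines in ingesters.
        lines = list(self.store.get(stream, []))
        for ingester in self.ingesters:
            lines.extend(ingester.recent(stream))
        return lines


@dataclass
class QueryFrontend:
    querier: Querier

    def query(self, streams: list[str]) -> dict[str, list[str]]:
        # Optional layer: split one request into per-stream sub-queries.
        return {s: self.querier.query(s) for s in streams}


store: Store = {}
ingesters = [Ingester(store) for _ in range(3)]
distributor = Distributor(ingesters)
frontend = QueryFrontend(Querier(ingesters, store))

distributor.push('{app="api"}', "GET /health 200")
for ingester in ingesters:
    ingester.flush()
distributor.push('{app="api"}', "GET /users 500")

print(frontend.query(['{app="api"}']))
# {'{app="api"}': ['GET /health 200', 'GET /users 500']}
```

The querier has to merge both sources because a freshly pushed line lives only in ingester memory until it is flushed, which is why the ingester shows up on both the write path and the read path.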

You can then combine these components into groups, and depending on the size of those groups, you get a different deployment topology, as shown below:

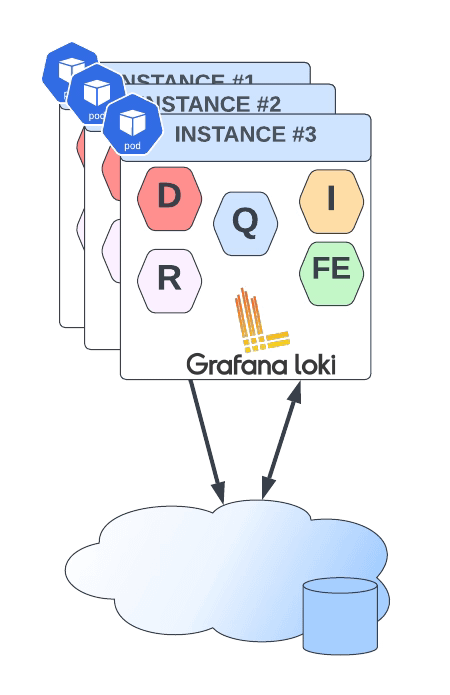

- Monolith: As you can imagine, all components run together in a single instance. This is the simplest option and is recommended as a starting point for volumes of up to roughly 100 GB/day. You can still scale this deployment horizontally, but all components scale together, and the instances need a shared object store to share state.

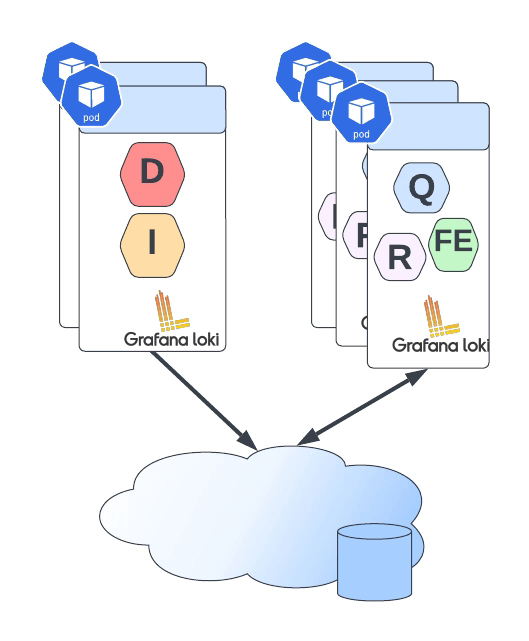

- Simple Scalable Deployment Model: This is the second level, and it can scale up to several TB of logs per day. It splits the components into two different profiles: read and write.

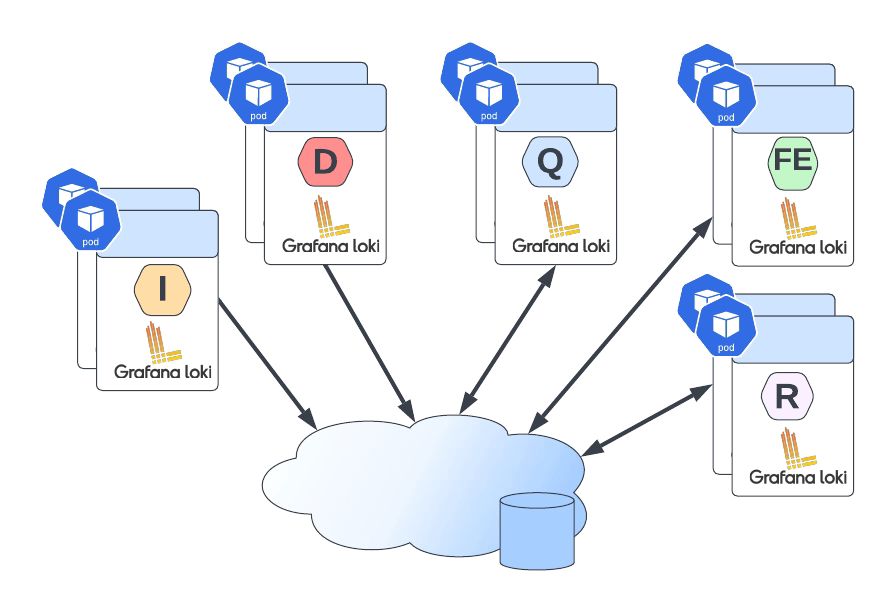

- Microservices: Each component runs and is managed independently, which gives you full control to scale each of these components on its own.
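As a back-of-the-envelope check, the volumes above can be turned into an average ingest rate and a rough model choice. This is only a sketch, not official sizing guidance: the 100 GB/day threshold comes from the text, while the 3000 GB/day ceiling for the simple scalable model is a hypothetical stand-in for "several TB per day", and `suggest_model` is an illustrative helper, not part of any tool.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def gb_per_day_to_mb_per_s(gb_per_day: float) -> float:
    """Average ingest rate in MB/s for a daily volume in GB (decimal units)."""
    return gb_per_day * 1000 / SECONDS_PER_DAY


def suggest_model(gb_per_day: float, ssd_ceiling_gb_per_day: float = 3000) -> str:
    """Rough heuristic, not official sizing guidance.

    100 GB/day is the monolith starting point quoted in the text; the
    3000 GB/day default ceiling for the simple scalable model is a
    hypothetical stand-in for "several TB per day".
    """
    if gb_per_day <= 100:
        return "monolith"
    if gb_per_day <= ssd_ceiling_gb_per_day:
        return "simple-scalable"
    return "microservices"


for volume in (50, 100, 800, 10_000):
    rate = round(gb_per_day_to_mb_per_s(volume), 2)
    print(f"{volume} GB/day ~ {rate} MB/s -> {suggest_model(volume)}")
```

For example, 100 GB/day works out to only about 1.16 MB/s on average. Peak ingest rates and query load can be much higher than that average, so treat the result as a starting point rather than a decision.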

Defining the deployment model of each instance is very easy: it is based on a single parameter named target. Depending on the value of target, the instance follows one of the previous deployment models:

- all (default): runs all components in a single instance, as in the monolith model.

- write: runs the write path of the simple scalable deployment model.

- read: runs the read path of the simple scalable deployment model.

- ingester, distributor, query-frontend, query-scheduler, querier, index-gateway, ruler, compactor: individual values that deploy a single component, for the microservices deployment model.
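In Loki itself, the value is passed with the `-target` command-line flag (or the top-level `target` key in the configuration file). The Python sketch below only models the idea of a target selecting a set of components; it is hypothetical and not how Loki implements it. Which components the read and write targets actually include varies between Loki versions, so the groups here are an assumption that follows the read/write path split, and components such as the ruler, compactor, query-scheduler and index-gateway are deliberately left out of both groups.

```python
# Hypothetical model of how a target value selects components; not Loki code.
COMPONENT_TARGETS = frozenset({
    "ingester", "distributor", "query-frontend", "query-scheduler",
    "querier", "index-gateway", "ruler", "compactor",
})

# Assumed grouping that follows the write/read path split described earlier.
# Real membership of "read" and "write" depends on the Loki version.
GROUP_TARGETS: dict[str, frozenset[str]] = {
    "all": COMPONENT_TARGETS,  # monolith: everything in one process
    "write": frozenset({"distributor", "ingester"}),
    "read": frozenset({"query-frontend", "querier"}),
}


def components_for(target: str) -> frozenset[str]:
    """Return the components a single instance runs for a given target."""
    if target in GROUP_TARGETS:
        return GROUP_TARGETS[target]
    if target in COMPONENT_TARGETS:
        # Microservices mode: one component per instance.
        return frozenset({target})
    raise ValueError(f"unknown target: {target!r}")


# A microservices deployment runs one instance per component target;
# together they cover everything the monolith runs in a single process.
deployment = sorted(COMPONENT_TARGETS)
running = frozenset().union(*(components_for(t) for t in deployment))
print(running == components_for("all"))  # True
```

The design point this illustrates is that the binary is the same in every model; only the target value changes what each instance does.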

Setting the target argument directly is useful for on-premises deployments. Still, if you are using Helm for the installation, there are already different Helm charts available for each deployment model:

- Helm Chart for Simple Scalable Model: https://github.com/grafana/helm-charts/tree/main/charts/loki-simple-scalable

- Helm Chart for Microservice Model: https://github.com/grafana/helm-charts/tree/main/charts/loki-distributed

- Helm Chart for Monolith Model: https://github.com/grafana/helm-charts/tree/main/charts/loki

All of these Helm charts are based on the same principle described above: the role of each instance is defined by the target argument, as you can see in the picture below:

📚 Want to dive deeper into Kubernetes? This article is part of our comprehensive Kubernetes Architecture Patterns guide, where you’ll find all fundamental and advanced concepts explained step by step.