Discover the options at your disposal for using disk space efficiently in your Docker installation

The rise of the container has been a game-changer for all of us, not only on the server side, where pretty much any new workload is deployed as a container, but also in our local environments, where the same change is happening.

We embrace containers to easily manage the different dependencies that we need to handle as developers, even when the task at hand is not container-related. Do you need a database up and running? You use a containerized version of it. Do you need a messaging system to test some of your applications? You quickly start a container providing that functionality.

As soon as you no longer need them, you kill them, and your system is as clean as it was before you started the task. But there are always things we need to take care of, even when we have a wonderful solution in front of us, and in a local Docker environment, disk usage is one of the most critical.

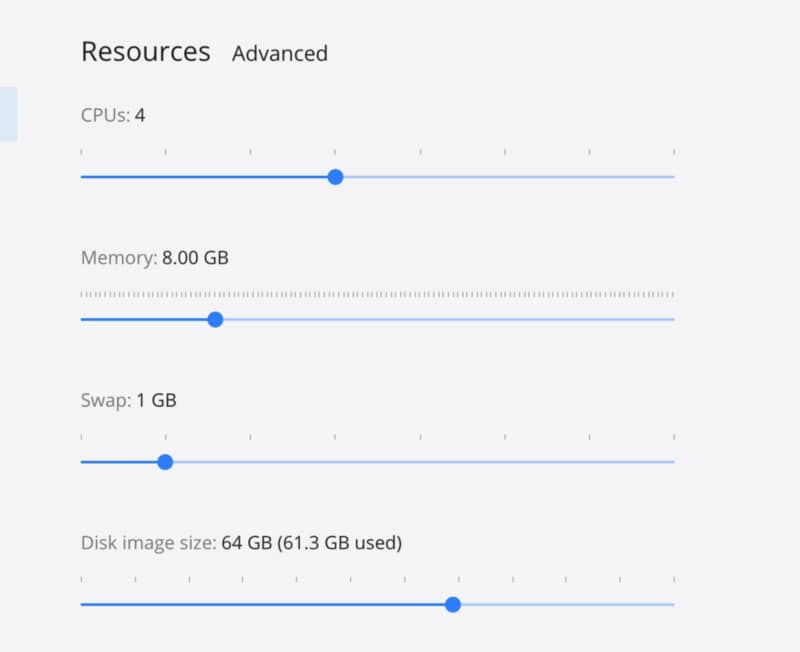

This picture of launching things over and over and then getting rid of them is only partly true, because all the images we have needed and all the containers we have launched are still in our system, waiting for a new round and using our disk resources in the meantime. You can see this in the current picture of my local Docker environment, with more than 60 GB used for that purpose.

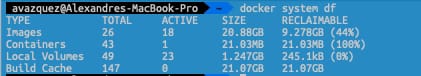

The first thing we need to do is check what is using this amount of space, to see if we can release some of it. To do that, we can use the docker system df command that the Docker CLI provides.
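As a minimal sketch, the check can be wrapped in a small shell helper. The function name docker_disk_summary is a hypothetical choice for illustration; only docker system df itself comes from the Docker CLI.

```shell
# docker_disk_summary is a hypothetical helper name, not part of the Docker CLI.
docker_disk_summary() {
  # Prints one row per type (images, containers, local volumes, build cache)
  # with the total count, active count, size and reclaimable space.
  docker system df
}
```

Calling docker_disk_summary in a terminal where the Docker daemon is running prints the summary table.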

As you can see, the 61 GB in use breaks down into 20.88 GB for the images, 21.03 MB for the containers I have defined, 1.25 GB for the local volumes, and 21.07 GB for the build cache. Since only 18 of my 26 images are active, I can reclaim up to 9.3 GB, which is a significant amount.

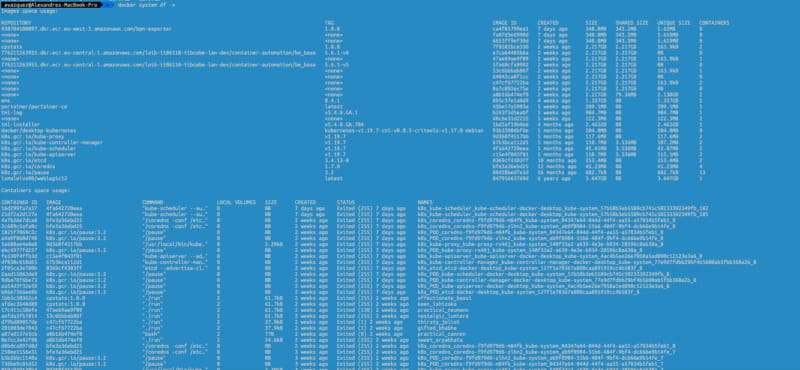

If we would like more details about this data, we can append the verbose option (-v) to the command, as you can see in the picture below:

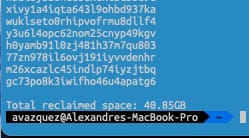
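A detailed listing like the one in the picture can be obtained with the verbose flag. The helper name docker_disk_details below is hypothetical and used only for illustration.

```shell
# docker_disk_details is a hypothetical helper name, not part of the Docker CLI.
docker_disk_details() {
  # -v (--verbose) lists each image, container, volume and build cache
  # entry with its own size, instead of one summary row per type.
  docker system df -v
}
```

The verbose output is handy for spotting a single oversized image or volume before deciding what to prune.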

So, after getting all this information, we can go ahead and prune the system. This gets rid of the stopped containers and unused images that you have in your system, along with unused networks and build cache, and to run it you only need to type this:

docker system prune -af

It has several options to tune the execution a little, which you can check in the official Docker documentation.
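As a rough sketch of what those options allow, here are three variants wrapped in shell helpers. The function names are hypothetical, and the one-week threshold (168h) is an arbitrary example value. The flags themselves (-a, -f, --filter, --volumes) belong to docker system prune.

```shell
# Hypothetical helper names; the flags are those of "docker system prune".

# Remove stopped containers, unused networks, dangling images and unused
# build cache. Without -a, images that still have a tag are kept.
prune_conservative() {
  docker system prune -f
}

# Also remove all unused images, but only touch objects created more than
# a week ago (168h is an example threshold).
prune_older_than_a_week() {
  docker system prune -af --filter "until=168h"
}

# Also remove unused volumes. Volumes may hold database data, so this is
# the most destructive variant. On recent Docker versions it covers
# anonymous volumes only.
prune_with_volumes() {
  docker system prune -af --volumes
}
```

The -f flag skips the confirmation prompt, so it is worth running the command once without it to read what would be removed.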

In my case, docker system prune -af helped me recover 40.8 GB of disk space, as you can see in the picture below.

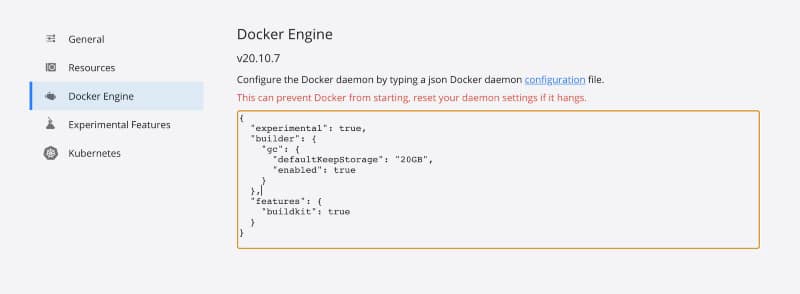

If you would like to go one step further, you can also tune some properties that control how this pruning behaves. For example, defaultKeepStorage lets you define how much disk space the build cache is allowed to keep. Keeping some of that cache reduces the network usage when you build images that share common layers.

To do that, you need to add the defaultKeepStorage setting to the Docker Engine section of your Docker configuration.
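The original snippet is not reproduced here, so what follows is only a sketch of what such a configuration usually looks like, assuming the builder garbage-collection keys of the Docker Engine configuration. The 20GB limit is an arbitrary example value, and print_builder_gc_config is a hypothetical helper that simply prints the JSON.

```shell
# print_builder_gc_config is a hypothetical helper name.
# It only prints a JSON fragment and does not modify any Docker setting.
print_builder_gc_config() {
  cat <<'EOF'
{
  "builder": {
    "gc": {
      "enabled": true,
      "defaultKeepStorage": "20GB"
    }
  }
}
EOF
}
```

The printed fragment can be merged into the Docker Engine section of Docker Desktop's settings, or into /etc/docker/daemon.json on a typical Linux installation, followed by a restart of Docker. Key names may differ between Docker versions, so check the documentation for the release you are running.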

I hope this housekeeping process helps your local environments shine again, so you can get the most out of them without wasting a lot of resources in the process.