ArgoCD has become the de facto standard for GitOps-based continuous delivery in Kubernetes. If you are running production workloads on Kubernetes and still deploying with raw kubectl apply or untracked Helm releases, ArgoCD solves a class of problems you may not even know you have yet. This guide covers everything from core concepts to production-grade configuration.

The Problem ArgoCD Solves

Traditional CI/CD pushes deployments into a cluster. A CI system runs tests, builds an image, and then executes kubectl apply or helm upgrade against the cluster. This model has several structural problems:

- Drift goes undetected. Someone applies a hotfix directly to the cluster. Now your Git repository no longer reflects reality, and nobody knows it.

- No single source of truth. The cluster state is authoritative, not Git. Your desired state and actual state can diverge silently.

- Rollback is painful. Rolling back a bad deployment means re-running old CI pipelines or manually reversing changes, neither of which is fast.

- Multi-cluster management compounds the problem. Each cluster becomes a snowflake with its own history of undocumented changes.

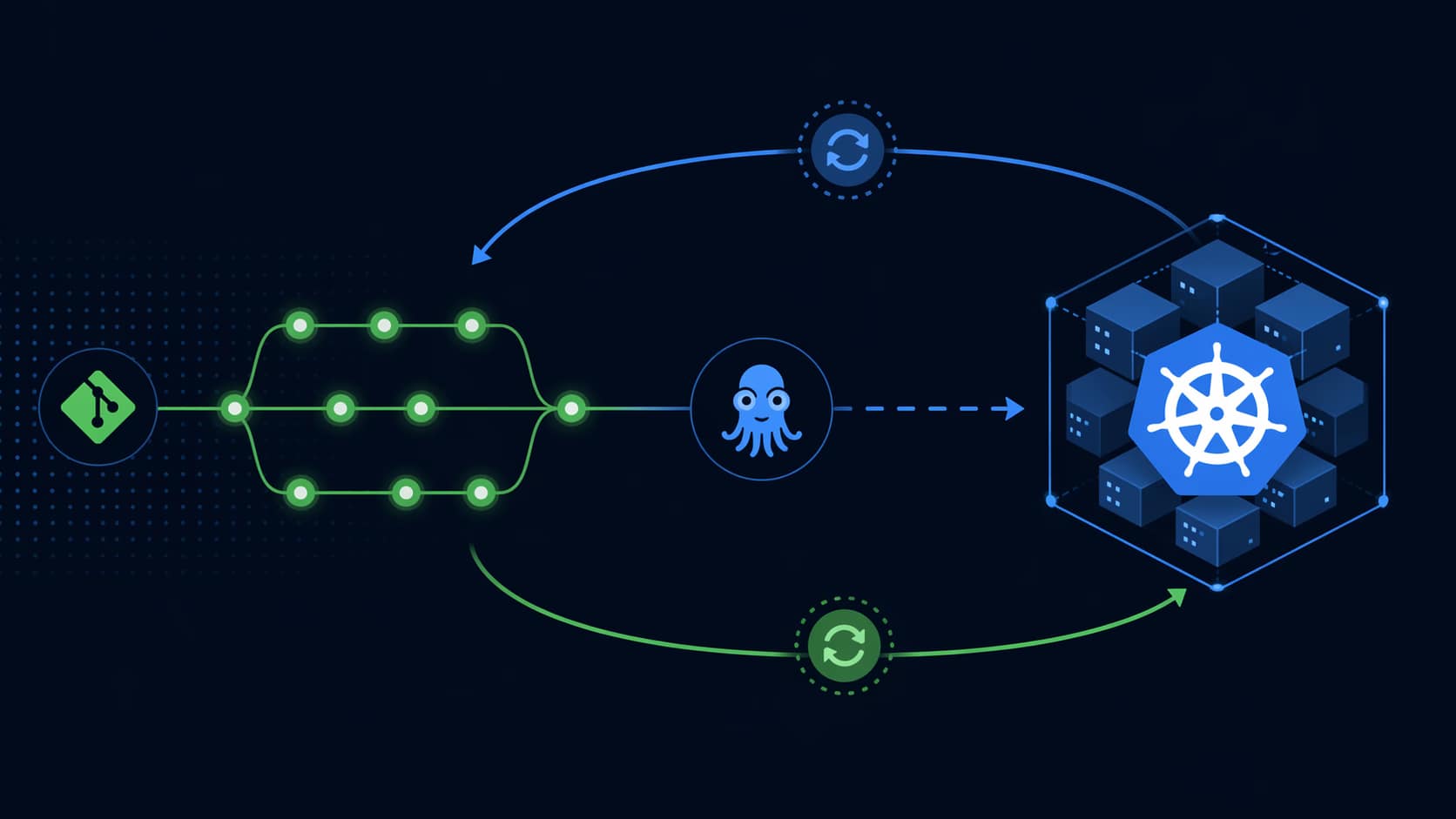

GitOps inverts this model. Git is the source of truth. The cluster pulls its desired state from Git and continuously reconciles toward it. ArgoCD is the most mature GitOps operator for Kubernetes, implementing this pull-based model with a production-ready feature set.

How ArgoCD Works: Core Architecture

ArgoCD runs as a set of controllers inside your Kubernetes cluster. The core components are:

- Application Controller — Watches both the Git repository and the live cluster state. Computes the diff and drives reconciliation.

- API Server — Exposes the gRPC/REST API consumed by the CLI, UI, and external systems.

- Repository Server — Generates Kubernetes manifests from source (Helm, Kustomize, plain YAML, Jsonnet).

- Redis — Caches cluster state and repository data to reduce API server load.

- Dex (optional) — Provides OIDC authentication for SSO integration.

The fundamental unit in ArgoCD is an Application — a CRD that maps a source (a path in a Git repo at a specific revision) to a destination (a namespace in a cluster). ArgoCD continuously compares the desired state from Git with the live state in the cluster and reports on the sync status.

Sync Status vs Health Status

Two orthogonal concepts you need to understand from day one:

- Sync Status — Does the live state match what Git says it should be? Values: Synced, OutOfSync, Unknown.

- Health Status — Is the application actually working? Values: Healthy, Progressing, Degraded, Suspended, Missing, Unknown.

An application can be Synced but Degraded — the manifests were applied correctly, but a pod is crash-looping. Conversely, it can be OutOfSync but Healthy — someone applied a change directly to the cluster outside of Git.
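To make the first case concrete, here is a trimmed sketch of what the status stanza of such an Application might report. Real output (for example from kubectl get application my-app -n argocd -o yaml) contains many more fields, and the comments are illustrative only.

```yaml
# Trimmed excerpt of an Application's status; most fields omitted.
status:
  sync:
    status: Synced      # live manifests match what Git declares
  health:
    status: Degraded    # but a workload is failing, e.g. a crash-looping pod
```

The two fields are evaluated independently, which is why alerting on only one of them tends to miss real problems.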

Installing ArgoCD

The official installation method uses a single manifest. For production, always pin to a specific version:

    kubectl create namespace argocd
    kubectl apply -n argocd -f https://raw.githubusercontent.com/argoproj/argo-cd/v2.11.0/manifests/install.yaml

This deploys ArgoCD in the argocd namespace with cluster-admin-level access to the cluster it runs in. For a production HA setup, use the manifests/ha/install.yaml variant, which runs multiple replicas of the API server and repository server and deploys Redis in HA mode.

Accessing the UI and CLI

The initial admin password is auto-generated and stored in a secret:

    argocd admin initial-password -n argocd

For local access, port-forward the API server:

    kubectl port-forward svc/argocd-server -n argocd 8080:443

Then log in via the CLI:

    argocd login localhost:8080 --username admin --password <password> --insecure

For production, expose the ArgoCD server via an Ingress or LoadBalancer with a proper TLS certificate. If you're using the NGINX Ingress Controller:

    apiVersion: networking.k8s.io/v1
    kind: Ingress
    metadata:
      name: argocd-server-ingress
      namespace: argocd
      annotations:
        nginx.ingress.kubernetes.io/ssl-passthrough: "true"
        nginx.ingress.kubernetes.io/backend-protocol: "HTTPS"
    spec:
      ingressClassName: nginx
      rules:
        - host: argocd.yourdomain.com
          http:
            paths:
              - path: /
                pathType: Prefix
                backend:
                  service:
                    name: argocd-server
                    port:
                      number: 443

Defining Your First Application

Applications can be created via the UI, the CLI, or declaratively with a YAML manifest. The declarative approach is the recommended one — it means your ArgoCD configuration itself is in Git:

    apiVersion: argoproj.io/v1alpha1
    kind: Application
    metadata:
      name: my-app
      namespace: argocd
    spec:
      project: default
      source:
        repoURL: https://github.com/your-org/your-app
        targetRevision: HEAD
        path: k8s/overlays/production
      destination:
        server: https://kubernetes.default.svc
        namespace: production
      syncPolicy:
        automated:
          prune: true
          selfHeal: true
        syncOptions:
          - CreateNamespace=true

Key fields to understand:

- targetRevision: Can be a branch name, tag, or commit SHA. For production, pin to a tag rather than HEAD.

- path: The directory within the repo containing your Kubernetes manifests.

- automated.prune: Automatically deletes resources that are no longer in Git. Required for true GitOps, but use it carefully: it will delete things.

- automated.selfHeal: Automatically reverts manual changes made directly to the cluster. This is what enforces Git as the single source of truth.
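As a sketch of the pinning advice above, the source block of a production Application might reference a release tag instead of HEAD. The tag v1.4.2 is a hypothetical placeholder, not a value taken from this guide.

```yaml
# Fragment of an Application spec; only the source block is shown.
spec:
  source:
    repoURL: https://github.com/your-org/your-app
    targetRevision: v1.4.2          # hypothetical release tag; a commit SHA also works
    path: k8s/overlays/production
```

With a pinned revision, promoting a release becomes an explicit change to targetRevision that can go through review like any other commit.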

Helm Integration

ArgoCD has native Helm support. It can deploy Helm charts directly from chart repositories or from your Git repository. You can override values per environment:

    apiVersion: argoproj.io/v1alpha1
    kind: Application
    metadata:
      name: prometheus-stack
      namespace: argocd
    spec:
      project: default
      source:
        repoURL: https://prometheus-community.github.io/helm-charts
        chart: kube-prometheus-stack
        targetRevision: 58.4.0
        helm:
          releaseName: prometheus-stack
          valuesObject:
            grafana:
              # Placeholder only: ArgoCD does not inject arbitrary environment
              # variables here, so supply the real password from a Secret.
              adminPassword: "${GRAFANA_PASSWORD}"
            prometheus:
              prometheusSpec:
                retention: 30d
                storageSpec:
                  volumeClaimTemplate:
                    spec:
                      storageClassName: fast-ssd
                      resources:
                        requests:
                          storage: 50Gi
      destination:
        server: https://kubernetes.default.svc
        namespace: observability

One important nuance: ArgoCD renders Helm charts with helm template and applies the resulting manifests itself; it never runs helm install or helm upgrade. Helm hooks (pre-install, post-upgrade, etc.) are therefore not executed by Helm but mapped to the closest ArgoCD sync hooks, and the release is not recorded in Helm's release history, so running helm list will not show ArgoCD-managed releases.
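To illustrate the per-environment overrides mentioned earlier in this section, here is a minimal sketch for a chart stored in Git. The chart path charts/my-chart, the file values-staging.yaml, and the replicaCount parameter are all assumptions made for the example.

```yaml
apiVersion: argoproj.io/v1alpha1
kind: Application
metadata:
  name: my-chart-staging            # hypothetical name
  namespace: argocd
spec:
  project: default
  source:
    repoURL: https://github.com/your-org/your-app
    targetRevision: HEAD
    path: charts/my-chart           # hypothetical chart directory in the repo
    helm:
      valueFiles:
        - values-staging.yaml       # resolved relative to the chart path
      parameters:
        - name: replicaCount        # hypothetical chart value
          value: "2"
  destination:
    server: https://kubernetes.default.svc
    namespace: staging
```

A production Application would typically be identical except for the values file and the destination namespace.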

Projects: Multi-Tenancy and Access Control

ArgoCD Projects provide multi-tenancy within a single ArgoCD instance. They let you restrict which source repositories, destination clusters, and namespaces a team can deploy to. Every Application belongs to a Project.

    apiVersion: argoproj.io/v1alpha1
    kind: AppProject
    metadata:
      name: platform-team
      namespace: argocd
    spec:
      description: Platform team applications
      sourceRepos:
        - 'https://github.com/your-org/*'
      destinations:
        - namespace: 'platform-*'
          server: https://kubernetes.default.svc
      clusterResourceWhitelist:
        - group: ''
          kind: Namespace
      namespaceResourceBlacklist:
        - group: ''
          kind: ResourceQuota

Projects are where you define the boundaries of what each team can do. The default project has no restrictions, so never use it for production workloads. Create dedicated projects per team or per environment.
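For illustration, an Application that lives inside this project could look like the sketch below. The application name, the platform-monitoring repository, and the platform-monitoring namespace are assumptions, chosen so that they fall within the project's sourceRepos and destinations patterns.

```yaml
apiVersion: argoproj.io/v1alpha1
kind: Application
metadata:
  name: monitoring-agents           # hypothetical name
  namespace: argocd
spec:
  project: platform-team
  source:
    repoURL: https://github.com/your-org/platform-monitoring   # matches 'https://github.com/your-org/*'
    targetRevision: HEAD
    path: manifests                 # hypothetical path
  destination:
    server: https://kubernetes.default.svc
    namespace: platform-monitoring  # matches 'platform-*'
```

If the destination namespace or the repository fell outside the project's patterns, ArgoCD should refuse to sync the Application and report the violation as an application condition.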

RBAC Configuration

ArgoCD has its own RBAC system, separate from Kubernetes RBAC, that governs what users can do through the ArgoCD API, UI, and CLI. It is configured via the argocd-rbac-cm ConfigMap. Roles are defined per project or globally:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: argocd-rbac-cm
      namespace: argocd
    data:
      policy.default: role:readonly
      policy.csv: |
        # Platform team has full access to platform-team project
        p, role:platform-admin, applications, *, platform-team/*, allow
        p, role:platform-admin, projects, get, platform-team, allow
        p, role:platform-admin, repositories, *, *, allow
        # Dev team can sync but not delete
        p, role:developer, applications, get, */*, allow
        p, role:developer, applications, sync, */*, allow
        p, role:developer, applications, action/*, */*, allow
        # Bind SSO groups to roles
        g, your-org:platform-team, role:platform-admin
        g, your-org:developers, role:developer

The policy.default: role:readonly setting ensures that any authenticated user who has no explicit role assignment gets read-only access, a safe default for production.
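The ConfigMap covers global roles. For the per-project case, a role can be declared on the AppProject itself, roughly as sketched below. The role name deployer and the SSO group your-org:platform-deployers are hypothetical, and the fragment is meant to be merged into the existing platform-team project rather than applied on its own.

```yaml
# Fragment of the platform-team AppProject; sourceRepos, destinations,
# and the other spec fields are omitted here.
apiVersion: argoproj.io/v1alpha1
kind: AppProject
metadata:
  name: platform-team
  namespace: argocd
spec:
  roles:
    - name: deployer                      # hypothetical role name
      description: Sync-only access to platform-team applications
      policies:
        - p, proj:platform-team:deployer, applications, get, platform-team/*, allow
        - p, proj:platform-team:deployer, applications, sync, platform-team/*, allow
      groups:
        - your-org:platform-deployers     # hypothetical SSO group
```

Project roles keep a team's permissions next to the project definition, which can be easier to review than one large global policy.csv.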

Multi-Cluster Management

ArgoCD can manage multiple Kubernetes clusters from a single control plane. Register external clusters with the CLI:

    # First, ensure the target cluster context is in your kubeconfig
    argocd cluster add production-eu-west --name production-eu-west

    # Verify registration
    argocd cluster list

ArgoCD will create a ServiceAccount in the target cluster and store its credentials as a Kubernetes secret in the ArgoCD namespace. Applications can then target this cluster by name via destination.name, or by API server URL via destination.server.
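A registered cluster can be referenced by its name through destination.name, while destination.server expects the API server URL. A minimal sketch of the name-based form, reusing the cluster name from the command above:

```yaml
# Fragment of an Application spec; only the destination block is shown.
spec:
  destination:
    name: production-eu-west   # the name passed to `argocd cluster add --name`
    namespace: production
```

Set either name or server on a destination, not both.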

For large-scale multi-cluster setups, consider the App of Apps pattern or ApplicationSets. The ApplicationSet controller generates Applications dynamically from generators: cluster lists, Git directory structures, or matrix combinations of these:

    apiVersion: argoproj.io/v1alpha1
    kind: ApplicationSet
    metadata:
      name: cluster-addons
      namespace: argocd
    spec:
      generators:
        - clusters:
            selector:
              matchLabels:
                environment: production
      template:
        metadata:
          name: '{{name}}-addons'
        spec:
          project: platform
          source:
            repoURL: https://github.com/your-org/cluster-addons
            targetRevision: HEAD
            path: 'addons/{{metadata.labels.region}}'
          destination:
            server: '{{server}}'
            namespace: kube-system

This single ApplicationSet deploys the appropriate addons to every registered cluster whose ArgoCD cluster secret is labeled environment: production, using each cluster's region label to select the correct path in the repository.
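The App of Apps pattern mentioned above can be sketched as a single root Application whose source directory contains other Application manifests. The gitops repository and the apps directory are assumptions made for this example.

```yaml
apiVersion: argoproj.io/v1alpha1
kind: Application
metadata:
  name: root
  namespace: argocd
spec:
  project: default                  # swap in a dedicated project for production
  source:
    repoURL: https://github.com/your-org/gitops   # hypothetical repository
    targetRevision: HEAD
    path: apps                      # hypothetical directory of child Application manifests
  destination:
    server: https://kubernetes.default.svc
    namespace: argocd               # child Applications are created in ArgoCD's namespace
  syncPolicy:
    automated:
      prune: true
      selfHeal: true
```

Adding a new application then means committing one more Application manifest under apps; the root Application picks it up on its next sync.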

Sync Strategies and Waves

When deploying complex applications with dependencies between resources, you need to control the order of deployment. ArgoCD provides two mechanisms:

Sync Phases

Resources are deployed in three phases: PreSync, Sync, and PostSync. Use Sync Hooks for resources that must complete before the main sync proceeds (database migrations, certificate issuance, etc.):

    apiVersion: batch/v1
    kind: Job
    metadata:
      name: db-migration
      annotations:
        argocd.argoproj.io/hook: PreSync
        argocd.argoproj.io/hook-delete-policy: HookSucceeded
    spec:
      template:
        spec:
          containers:
            - name: migrate
              image: your-app:v1.2.3
              command: ["./migrate.sh"]
          restartPolicy: Never

Sync Waves

Within a phase, waves control ordering. Resources default to wave 0, and resources with a lower wave number are applied and must become healthy before resources with higher wave numbers are applied:

    # Applied first
    metadata:
      annotations:
        argocd.argoproj.io/sync-wave: "1"
    ---
    # Applied after wave 1 is healthy
    metadata:
      annotations:
        argocd.argoproj.io/sync-wave: "2"

Notifications and Alerting

ArgoCD Notifications is a standalone controller that sends alerts when Application state changes. It supports Slack, PagerDuty, GitHub commit status, email, and a dozen other providers. Configure it via the argocd-notifications-cm ConfigMap:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: argocd-notifications-cm
      namespace: argocd
    data:
      service.slack: |
        token: $slack-token
      template.app-sync-failed: |
        slack:
          attachments: |
            [{
              "title": "{{.app.metadata.name}}",
              "color": "#E96D76",
              "fields": [{
                "title": "Sync Status",
                "value": "{{.app.status.sync.status}}",
                "short": true
              },{
                "title": "Message",
                "value": "{{range .app.status.conditions}}{{.message}}{{end}}",
                "short": false
              }]
            }]
      trigger.on-sync-failed: |
        - when: app.status.sync.status == 'Unknown'
          send: [app-sync-failed]
        - when: app.status.operationState.phase in ['Error', 'Failed']
          send: [app-sync-failed]

Secret Management with ArgoCD

ArgoCD intentionally has no secret management built in — storing secrets in Git as plain text is never acceptable. The common patterns are:

- Sealed Secrets (Bitnami) — Encrypts secrets with a cluster-specific key. The encrypted secret can be committed to Git; only the cluster can decrypt it.

- External Secrets Operator — Syncs secrets from Vault, AWS Secrets Manager, GCP Secret Manager, etc. into Kubernetes secrets. The ArgoCD Application manages the ExternalSecret CRD, not the actual secret value.

- argocd-vault-plugin — A plugin that replaces placeholder values in manifests with secrets retrieved from Vault at sync time.

The External Secrets Operator approach is the most flexible for teams already using a centralized secrets backend. The Application in ArgoCD deploys ExternalSecret objects, which the ESO controller resolves at runtime without ever touching Git.
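As a rough sketch of that approach, the manifest committed to Git might look like the following. The store name vault-backend, the remote key apps/my-app/db, and the secret name are hypothetical, and the apiVersion depends on the External Secrets Operator release you run.

```yaml
apiVersion: external-secrets.io/v1beta1   # newer ESO releases may use a different version
kind: ExternalSecret
metadata:
  name: app-db-credentials          # hypothetical name
  namespace: production
spec:
  refreshInterval: 1h
  secretStoreRef:
    name: vault-backend             # hypothetical ClusterSecretStore
    kind: ClusterSecretStore
  target:
    name: app-db-credentials        # Kubernetes Secret that ESO creates
  data:
    - secretKey: password           # key inside the generated Secret
      remoteRef:
        key: apps/my-app/db         # hypothetical path in the secrets backend
        property: password
```

Only this reference is stored in Git; the secret value stays in the backend and is written into the cluster by the operator.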

Production Best Practices

- Run ArgoCD in HA mode. Use manifests/ha/install.yaml, scale the API server to 3 replicas, and shard the application controller for large installations (100+ applications).

- Pin image versions. Never use latest for the ArgoCD image itself. Pin to a specific version and upgrade deliberately.

- Use the App of Apps pattern for bootstrapping. A single root Application deploys all other Applications. This makes cluster bootstrapping idempotent and reproducible.

- Separate ArgoCD config from application config. Store ArgoCD Application manifests in a dedicated gitops repository, separate from application source code.

- Enable resource tracking via annotations. Set application.resourceTrackingMethod: annotation in argocd-cm instead of the label-based tracking that is the default in ArgoCD 2.x, which can conflict with Helm's own labels.

- Set resource limits on ArgoCD controllers. Application controller CPU and memory scale with the number of resources tracked. Monitor and tune accordingly.

- Restrict auto-sync in production. Consider requiring manual sync approval for production environments even when using GitOps, or at minimum require a PR approval gate before changes reach the target branch.
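The resource tracking setting from the list above lives in the argocd-cm ConfigMap. A minimal sketch, showing only that one key (an existing argocd-cm will usually contain other settings that should be preserved):

```yaml
apiVersion: v1
kind: ConfigMap
metadata:
  name: argocd-cm
  namespace: argocd
  labels:
    app.kubernetes.io/part-of: argocd
data:
  # Track managed resources with an annotation instead of a label
  application.resourceTrackingMethod: annotation
```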

ArgoCD vs Flux

Flux v2 is the other major GitOps operator. Both are CNCF projects. The main differences in practice:

| Feature | ArgoCD | Flux v2 |
|---|---|---|
| UI | Built-in web UI | No official UI (use Weave GitOps) |
| Multi-cluster | Single control plane manages many clusters | Agent per cluster, pull model |
| ApplicationSets | Native | Kustomization + HelmRelease |
| Secret management | Plugin-based | SOPS native integration |
| Learning curve | Steeper (more concepts) | Lower (Kubernetes-native CRDs) |
| CNCF status | Graduated | Graduated |

ArgoCD wins when you need the UI, multi-cluster management from a central plane, or have a large operations team that benefits from the visual application topology view. Flux wins when you want a simpler, purely Kubernetes-native approach with better SOPS integration for secret management.

FAQ

Can ArgoCD deploy to the cluster it runs in?

Yes. The https://kubernetes.default.svc destination refers to the local cluster. ArgoCD can manage both its own cluster and external clusters simultaneously.

Does ArgoCD support private Git repositories?

Yes. Configure repository credentials via argocd repo add with SSH keys, HTTPS username/password, or GitHub App credentials. Credentials are stored as Kubernetes secrets in the ArgoCD namespace.
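The same credentials can also be declared as a labeled Secret, which is roughly what the CLI creates for you. In this sketch the repository URL and secret name are hypothetical, and the password is a placeholder that should come from one of the secret management patterns described earlier, not from plain text in Git.

```yaml
apiVersion: v1
kind: Secret
metadata:
  name: private-repo                # hypothetical name
  namespace: argocd
  labels:
    argocd.argoproj.io/secret-type: repository   # marks the Secret as a repository definition
stringData:
  type: git
  url: https://github.com/your-org/private-app   # hypothetical repository
  username: git
  password: <personal-access-token>              # placeholder; do not commit real values
```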

How does ArgoCD handle CRD installation?

CRDs can be managed by ArgoCD, but there is a chicken-and-egg problem: if a CRD is not yet installed, ArgoCD cannot validate resources that use it. The recommended pattern is to put CRDs in wave 1 and dependent resources in wave 2, or to use a separate Application for CRDs.
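When the CRD and its custom resources are synced from the same Application, the wave ordering can be combined with a sync option that skips the dry run for kinds the cluster does not know yet. The Widget kind and the example.com API group below are hypothetical.

```yaml
apiVersion: example.com/v1alpha1    # hypothetical API group
kind: Widget                        # hypothetical custom resource
metadata:
  name: sample
  annotations:
    argocd.argoproj.io/sync-wave: "2"   # applied after the CRD in wave 1
    argocd.argoproj.io/sync-options: SkipDryRunOnMissingResource=true
spec: {}
```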

What is the difference between an Application and an AppProject?

An Application is the unit of deployment — it maps a Git source to a cluster destination. An AppProject is a grouping and access control boundary — it restricts what sources and destinations an Application within the project can use. Every Application belongs to exactly one AppProject.

How do I roll back a deployment with ArgoCD?

The GitOps way: revert the commit in Git and let ArgoCD reconcile. ArgoCD also provides a rollback to any previous sync revision through the UI or argocd app rollback, but it is unavailable while automated sync is enabled and should be treated as a temporary measure: the Git history should always be updated to match.

Getting Started

The fastest path from zero to a working ArgoCD setup on a local cluster:

    # 1. Create a local cluster (kind or minikube)
    kind create cluster --name argocd-demo

    # 2. Install ArgoCD
    kubectl create namespace argocd
    kubectl apply -n argocd -f https://raw.githubusercontent.com/argoproj/argo-cd/stable/manifests/install.yaml

    # 3. Wait for pods
    kubectl wait --for=condition=Ready pods --all -n argocd --timeout=120s

    # 4. Get the initial admin password
    argocd admin initial-password -n argocd

    # 5. Port-forward and log in (prompts for the password from step 4)
    kubectl port-forward svc/argocd-server -n argocd 8080:443 &
    argocd login localhost:8080 --username admin --insecure

    # 6. Deploy your first application
    #    CreateNamespace=true lets the sync create the guestbook namespace
    argocd app create guestbook \
      --repo https://github.com/argoproj/argocd-example-apps.git \
      --path guestbook \
      --dest-server https://kubernetes.default.svc \
      --dest-namespace guestbook \
      --sync-policy automated \
      --sync-option CreateNamespace=true

From here, the natural next steps are integrating ArgoCD with your existing CI pipeline (CI builds and pushes the image, updates the image tag in Git, ArgoCD detects the change and syncs), configuring SSO via Dex, and setting up the App of Apps pattern for managing multiple applications declaratively.
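For the CI integration step, one common shape is a Kustomize overlay whose image tag the pipeline rewrites on every release. This is a sketch under assumptions: the overlay layout, the image name your-org/your-app, and the tag are all placeholders.

```yaml
# k8s/overlays/production/kustomization.yaml (hypothetical layout)
apiVersion: kustomize.config.k8s.io/v1beta1
kind: Kustomization
resources:
  - ../../base
images:
  - name: your-org/your-app   # image name as referenced in the base manifests
    newTag: v1.2.4            # CI commits a change to this line after pushing the image
```

ArgoCD never needs credentials for the CI system in this flow; it only sees the new commit and reconciles the cluster toward it.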

For teams looking to go deeper on GitOps and ArgoCD in production, the Kubernetes architecture patterns guide covers how ArgoCD fits into a broader platform engineering stack alongside service mesh, policy enforcement, and observability tooling.