Top 3 Web Apps That I Use Daily as a Software Architect to Do My Job in a Better, More Efficient Way

Web apps are part of our lives and of our creative and work processes. This is especially true for those of us working in the software industry: pretty much every task we need to accomplish requires a tool (if not more than one), and some tools make the process smoother or easier.

I have a preference for native desktop apps, probably because I am old enough to have suffered through the first age of web apps, which were a nightmare. But things have changed a lot over the years, and now I have to admit that there are some I use pretty much every day:

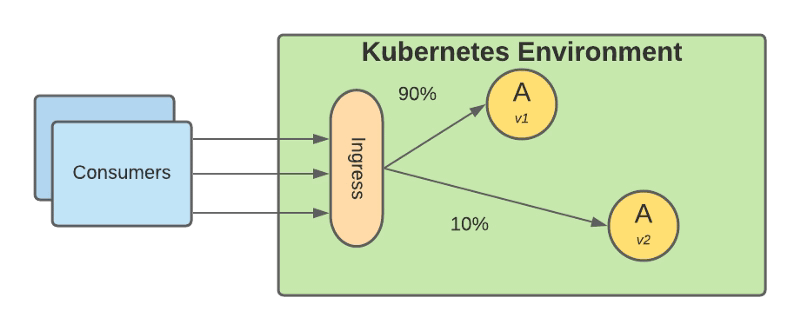

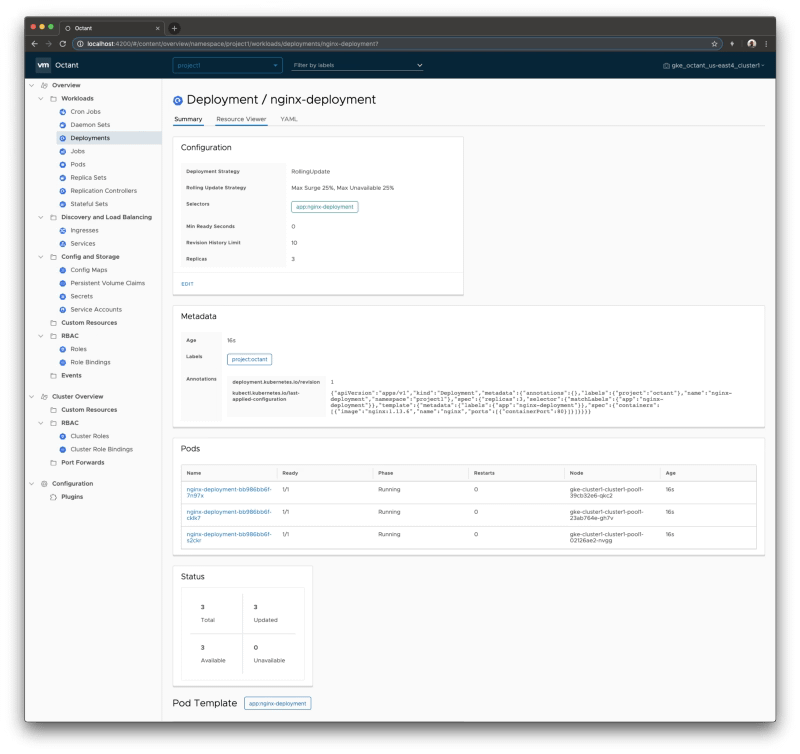

1.- Lucidchart: Your Diagram Tool

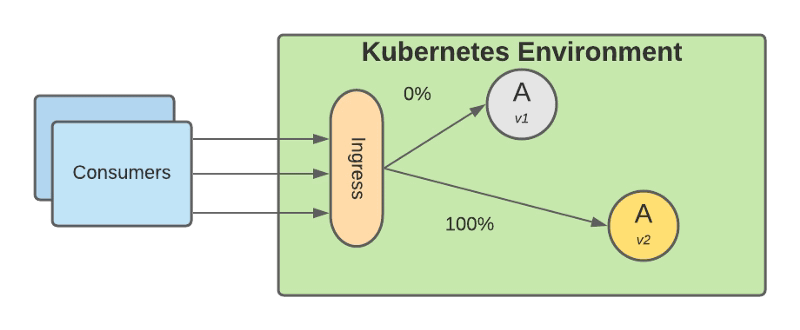

This is pretty much the only tool I use to cover all my sketching needs as a Software Architect, and there are a lot of them. It is comparable to native alternatives like Microsoft Visio, but I like its focus on the software industry, with many shapes aimed at modern architectures, including the shapes for the main cloud providers such as Microsoft Azure, Amazon Web Services, and Google Cloud.

You can easily create design diagrams, UML diagrams, or architecture diagrams with a professional look and feel. It has a free license for personal use, but I encourage you to move to one of the pro plans, especially if you are a software company. This is a very innovative company that is not stopping at diagramming: it also offers products like Lucidspark, which brings the visual thinking approach to the digital world in an excellent manner. I have used other alternatives like draw.io or Google Shapes, but Lucidchart works better for my creative process.

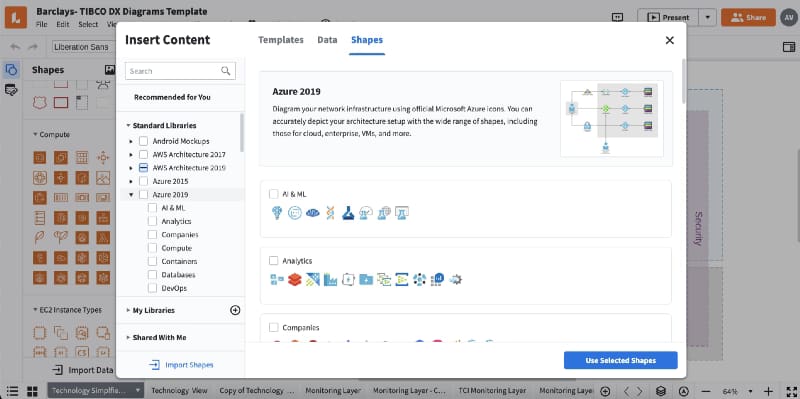

2.- regex101.com: Your RegExp Jedi Master Online

No matter what you do for a living, whether you are a System Administrator, a Software Developer, a Software Architect working on the high-level definition of architectures, or a pre-sales engineer, you will need to write a regular expression at some point, and it will probably not be an easy one. So you need tools that help you in this process, and this is what regex101.com provides.

A clean interface gives you an easy way to test your regular expression or fix it if needed. It is also a way to improve your theoretical knowledge of regular expressions, showing you how to express them in the most efficient way. It is a must-have tool for your bookmarks to cut the time you need to create a tested regular expression and become a regex master.
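Once a pattern behaves as expected in the online tester, it helps to keep the same test strings next to the code that uses it. The following is only a minimal sketch in Python: the date pattern, the test cases, and the `check_cases` helper are hypothetical examples made up for illustration, not something taken from regex101.com.

```python
import re

# Hypothetical pattern (an assumption for illustration): an ISO-style date,
# YYYY-MM-DD, with named groups for each part.
DATE_PATTERN = re.compile(
    r"^(?P<year>\d{4})-(?P<month>0[1-9]|1[0-2])-(?P<day>0[1-9]|[12]\d|3[01])$"
)

# Test strings paired with whether the pattern is expected to match them,
# the same kind of list you would paste into an online tester.
CASES = {
    "2021-03-15": True,
    "2021-13-01": False,  # month out of range
    "21-03-15": False,    # two-digit year
    "2021-03-32": False,  # day out of range
}


def check_cases(pattern, cases):
    """Return the inputs whose match result differs from the expectation."""
    return [
        text
        for text, expected in cases.items()
        if bool(pattern.match(text)) != expected
    ]


if __name__ == "__main__":
    failures = check_cases(DATE_PATTERN, CASES)
    print("unexpected results:", failures)
```

An empty list means every test string behaved as expected, so the pattern can be changed later with some confidence that nothing broke.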

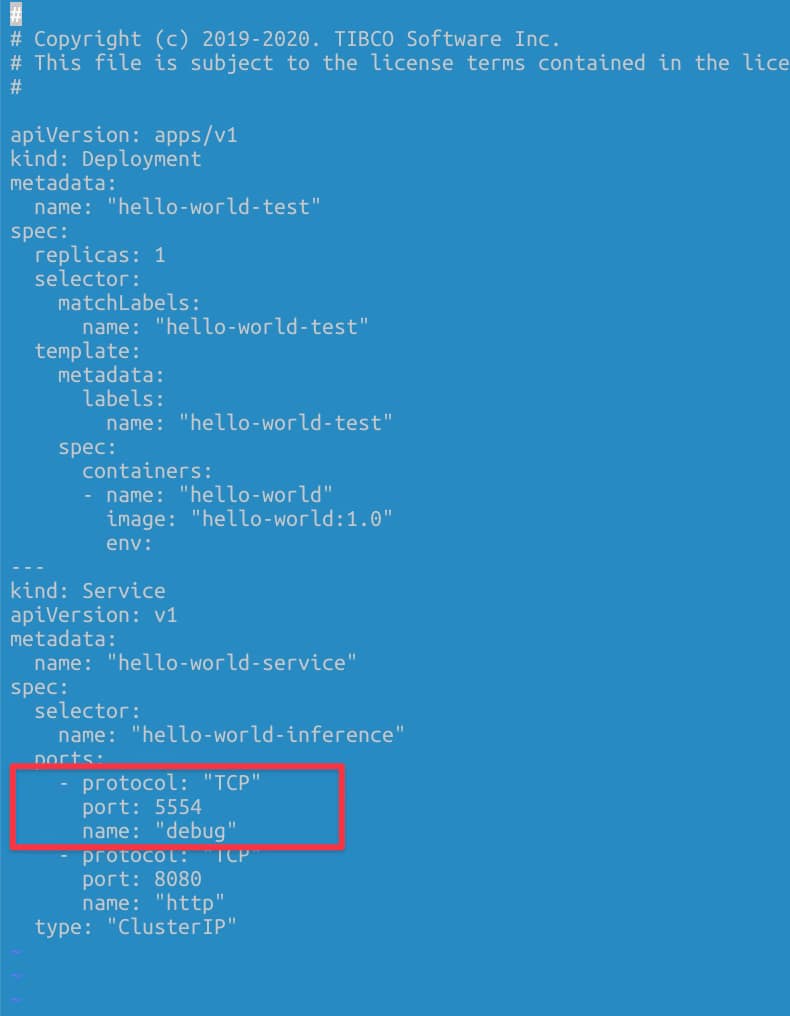

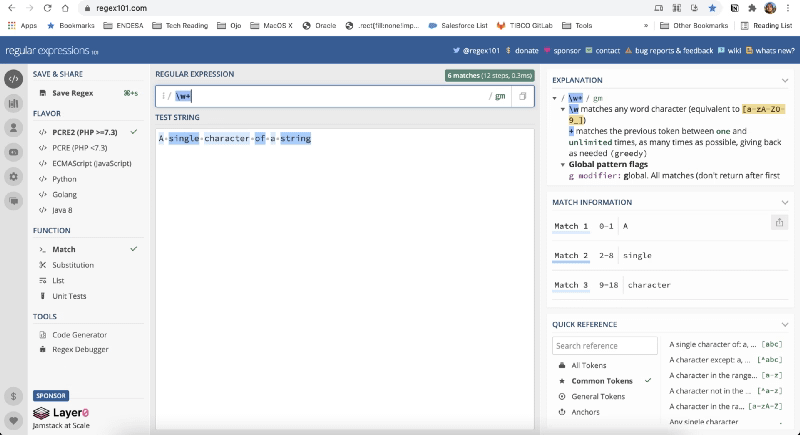

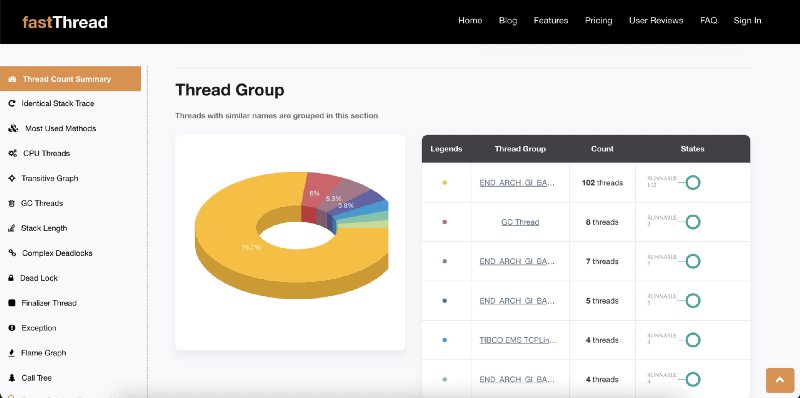

3.- fastthread.io: Your Java Wise Advisor

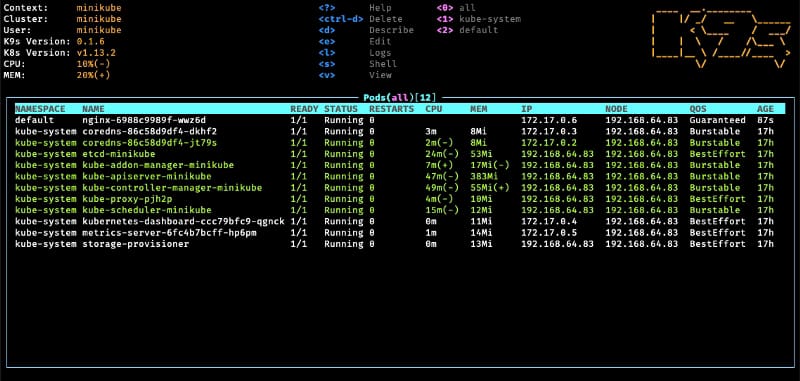

If you deal with any Java program in your daily activities, you have surely had to analyze thread dumps to understand an unexpected behavior of a Java program. That means having a stack trace for each of the hundreds of threads you can get and extracting some insights from that data. To help with that process you have fastthread.io, which provides an initial analysis focused on the usual key factors such as thread status (blocked, timed_waiting, runnable...), blocking situations, similar stack traces, pool management, and CPU consumption.

It is clearly a must if you need to deal with any Java-based app, at least as a first analysis that helps you focus on what is relevant, so you can apply your own wisdom on top of a preliminary analysis already done in an automated, graph-rich way.
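To give an idea of what the simplest part of such an analysis looks like, here is a rough sketch in Python that counts threads per state in a HotSpot-style thread dump. It is not how fastthread.io works internally; the `count_thread_states` helper and the sample dump (thread names, ids, and class names) are invented for illustration, and only the `java.lang.Thread.State:` line format comes from real dumps.

```python
import re
from collections import Counter

# HotSpot thread dumps print a line such as
#   java.lang.Thread.State: TIMED_WAITING (sleeping)
# under each Java thread header; this captures the state name.
STATE_LINE = re.compile(r"^\s*java\.lang\.Thread\.State:\s+(\w+)", re.MULTILINE)


def count_thread_states(dump_text):
    """Count how many threads are in each state in a thread dump."""
    return Counter(STATE_LINE.findall(dump_text))


# Made-up sample dump, shortened to three threads (all names are hypothetical).
SAMPLE_DUMP = """
"http-worker-1" #21 prio=5 os_prio=0 tid=0x01 nid=0x1a03 waiting for monitor entry
   java.lang.Thread.State: BLOCKED (on object monitor)
        at com.example.Cache.get(Cache.java:42)

"http-worker-2" #22 prio=5 os_prio=0 tid=0x02 nid=0x1a04 waiting for monitor entry
   java.lang.Thread.State: BLOCKED (on object monitor)
        at com.example.Cache.get(Cache.java:42)

"scheduler-1" #30 prio=5 os_prio=0 tid=0x03 nid=0x1a10 waiting on condition
   java.lang.Thread.State: TIMED_WAITING (sleeping)
        at java.lang.Thread.sleep(Native Method)
"""

if __name__ == "__main__":
    for state, count in count_thread_states(SAMPLE_DUMP).most_common():
        print(state, count)
```

Even this small summary shows why an automated first pass is useful: with hundreds of threads, a high count of blocked threads stands out immediately, and you can then go to the matching stack traces.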

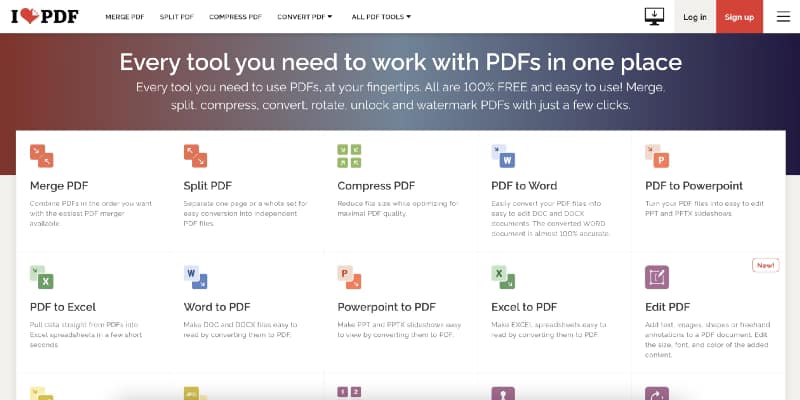

Bonus Track: ilovepdf

As a final addition to this list, I could not forget about one more app. This is not a geek web app, but it is the one I use the most: ilovepdf is a set of web apps covering all your PDF needs, easy to use and running directly in your browser. ilovepdf lets you convert your PDF to more editable formats such as Word or Excel, split a PDF or merge several PDF documents into one, rotate pages, add a watermark, unlock files… and the one I use the most: compress a PDF to reduce its size without any visible loss of quality, so it can be sent as an email attachment.

Summary

I hope these tools help you make your daily work more efficient. If you already have another web app for some of these tasks, maybe give these a try to see whether they can be of any benefit to you. If you have other web apps that you use a lot in your daily work, please let me know in your responses to this article.