Introduction

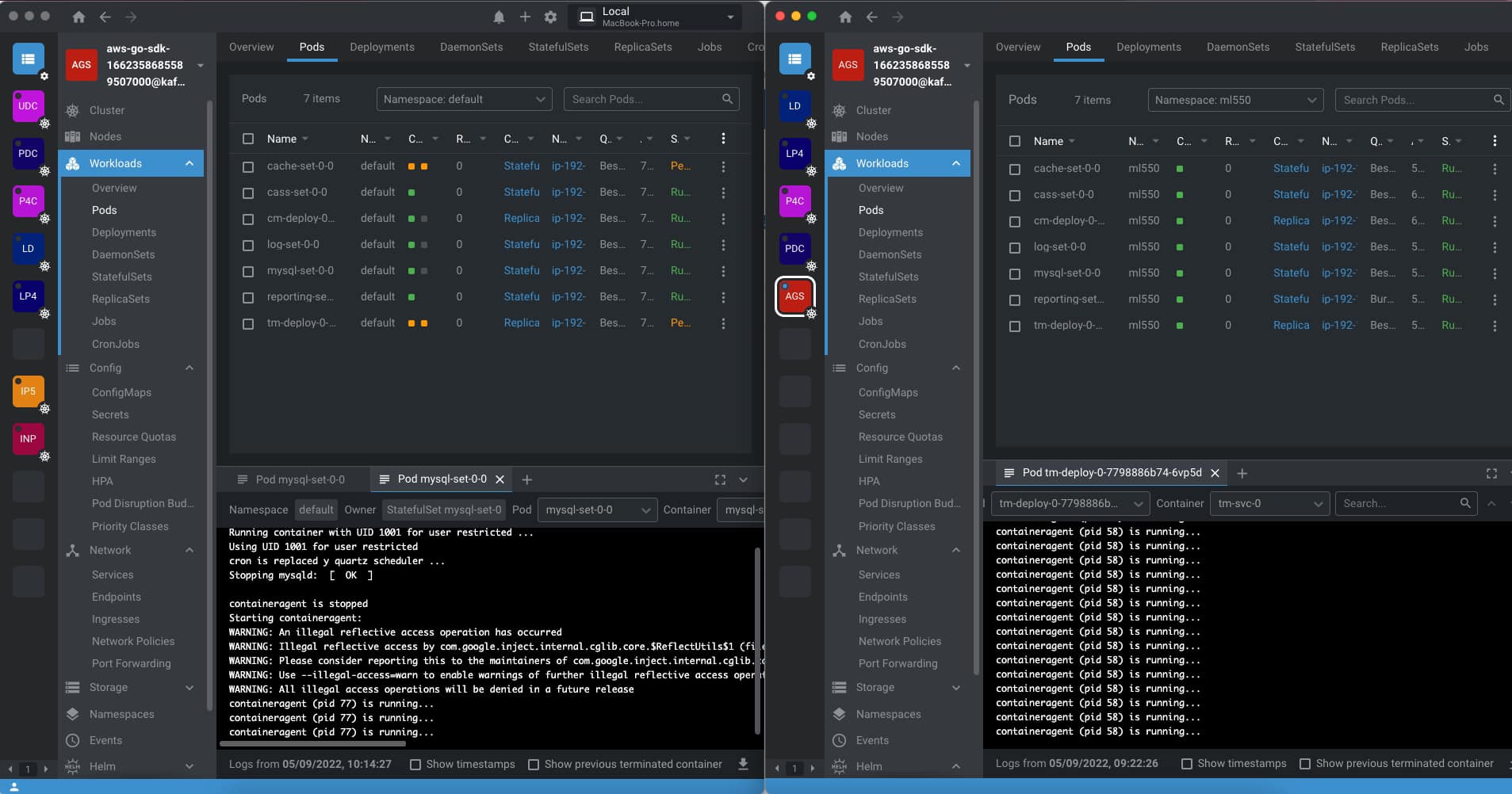

We have already talked about Lens several times in different articles, but today I am bringing OpenLens here. After the release of Lens 6 in late July, a lot of questions have arisen, especially regarding the changes it brings and its relationship with the OpenLens project. So I thought it would be useful to gather this information in one place in case any of you are confused, and I will try to answer the main questions you may have at the moment.

What is OpenLens?

OpenLens is the open-source project behind the code that supports the main functionality of Lens, the software that helps you manage and run your Kubernetes clusters. It is available on GitHub (https://github.com/lensapp/lens), and it is fully open source and distributed under the MIT License. In its own words, this is the definition:

This repository ("OpenLens") is where Team Lens develops the Lens IDE product together with the community. It is backed by a number of Kubernetes and cloud-native ecosystem pioneers. This source code is available to everyone under the MIT license.

OpenLens vs Lens?

So the main question you may have at the moment is what the difference between Lens and OpenLens is. The main difference is that Lens is built on top of OpenLens and includes some additional software and libraries with different licenses. It is developed by the Mirantis team (the same company that owns Docker Enterprise), and it is distributed under a traditional EULA.

Is Lens going to be private?

We need to start by saying that Lens has been released under a traditional EULA since the beginning, so on that front there is not much difference. We can say that OpenLens is open source while Lens is freeware, or at least was freeware up to that point. But on July 28th we had the release of Lens 6, where the differences between the two projects started to arise.

As explained in the Mirantis blog post, a lot of changes and new capabilities have been included, but on top of that the vision has also been revealed. As the Mirantis team says, they do not want to stop at what Lens does today to manage Kubernetes clusters. They want to go beyond that by also providing a web version of Lens to simplify access even more, extending its reach beyond Kubernetes, and so on.

You have to admit that this is a very compelling and, at the same time, very ambitious vision, and that is also why they are making some changes to the license and model, which we are going to talk about below.

Is Lens still free?

We already mentioned that Lens was always released under a traditional EULA, so it was not open source like its core, OpenLens, but it was free to use. With the release on July 28th, this is changing a bit to support the new vision.

They are introducing a new subscription model that depends on how you use the tool, and the approach is very similar to the one Docker adopted for Docker Desktop, which, if you remember, we also covered in an article.

- Lens Personal subscriptions are for personal use, education, and startups (less than $10 million in annual revenue or funding). They are free of charge.

- Lens Pro subscriptions are required for professional use in larger businesses. The pricing is $19.90 per user/month or $199 per user/year.

The new license applies from the release of Lens 6 on July 28th, but they have provided a grace period until January 2023 so you can adapt to the new model.
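To make the two tiers concrete, here is a minimal Python sketch of the rule and the pricing listed above. The function names (`required_tier`, `pro_cost_per_year_cents`) are hypothetical and only for illustration, and the tier check is a simplification of the published terms, so treat it as a rough guide rather than the actual license logic. Amounts are kept in cents to avoid floating-point rounding.

```python
# Figures taken from the subscription tiers listed above (stored in cents).
PRO_MONTHLY_CENTS = 1990        # $19.90 per user/month
PRO_YEARLY_CENTS = 19900        # $199 per user/year
PERSONAL_LIMIT_USD = 10_000_000  # startup threshold: annual revenue or funding


def required_tier(annual_revenue_or_funding_usd, personal_or_education=False):
    """Simplified reading of the tiers; hypothetical helper, not an official rule engine."""
    if personal_or_education or annual_revenue_or_funding_usd < PERSONAL_LIMIT_USD:
        return "Lens Personal"
    return "Lens Pro"


def pro_cost_per_year_cents(users, billing="yearly"):
    """Cost of Lens Pro for one full year, in cents, for the given billing cycle."""
    if billing == "monthly":
        return users * PRO_MONTHLY_CENTS * 12
    if billing == "yearly":
        return users * PRO_YEARLY_CENTS
    raise ValueError("billing must be 'monthly' or 'yearly'")


if __name__ == "__main__":
    team = 25  # example team size, an assumption for this sketch
    monthly = pro_cost_per_year_cents(team, "monthly")
    yearly = pro_cost_per_year_cents(team, "yearly")
    print(required_tier(50_000_000))
    print(f"monthly billing: ${monthly / 100:.2f} per year")
    print(f"yearly billing:  ${yearly / 100:.2f} per year")
```

For a single user, paying monthly for a full year comes to $238.80, against $199 on the yearly plan.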

Should I stop using Lens now?

This is, as always, up to you, but things are going to stay the same until January 2023, and at that point you need to formalize your situation with Lens and Mirantis. If you qualify for a Lens Personal license because you are working for a startup or on open source, you can continue using it without any problem. If that is not the case, it is up to your company to decide whether the additional features they are providing now, along with the vision for the future, justify the investment in a Lens Pro license.

You will always have the option to switch from Lens to OpenLens. It will not be 100% the same, but the core functionality and approach will, at this moment, continue to be the same, and the project will surely remain very active. Also, as Mirantis already confirmed in the same blog post: “There are no changes to OpenLens licensing or any other upstream open source projects used by Lens Desktop.” So you should not expect the same situation to happen if you switch to OpenLens or are already using it.

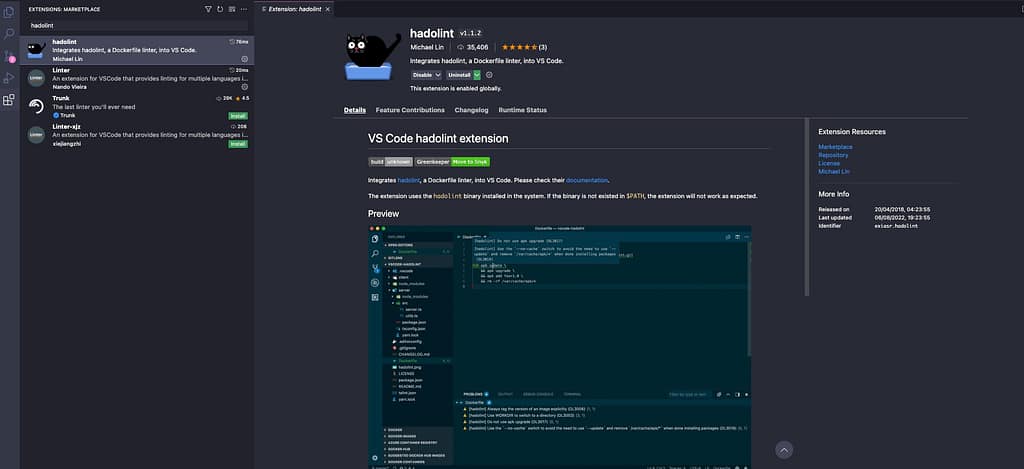

How can I install OpenLens?

Installing OpenLens is a little bit tricky because you need to generate your own build from the source. To ease that path, several awesome people are doing that in their GitHub repositories, such as Muhammed Kalkan, who provides a repo with the latest versions, built with only open-source components, for the major platforms (Windows, macOS (Intel and Apple Silicon), and Linux), available here:

What Features Am I Losing if I Switch to OpenLens?

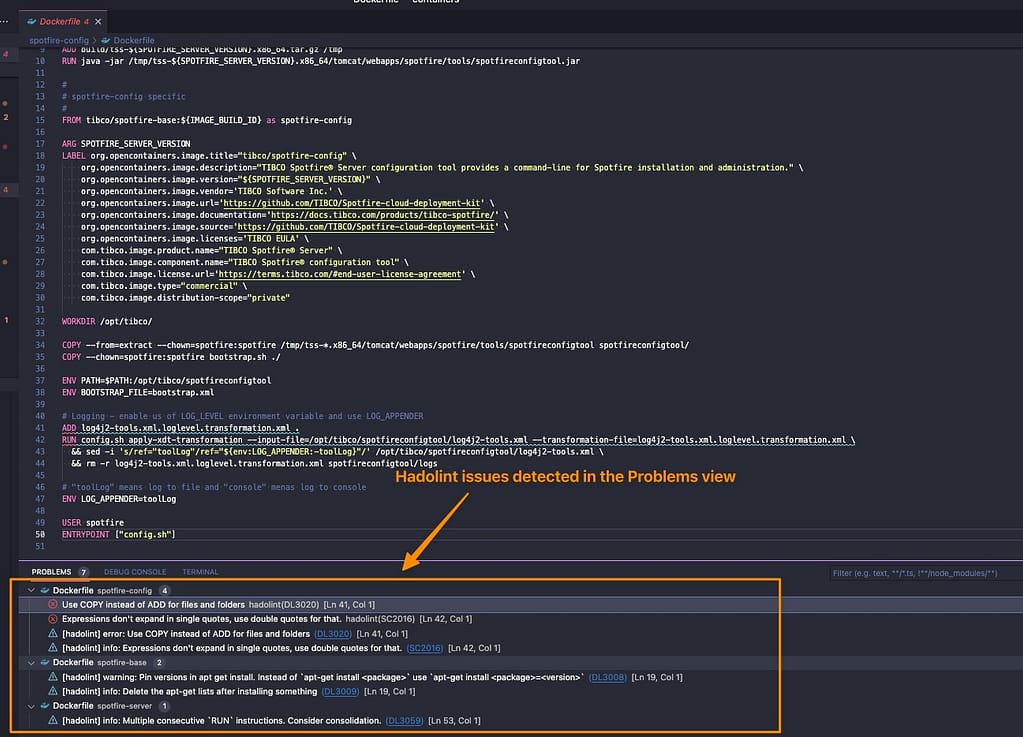

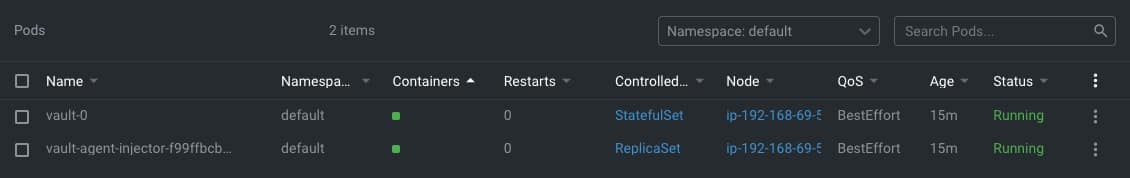

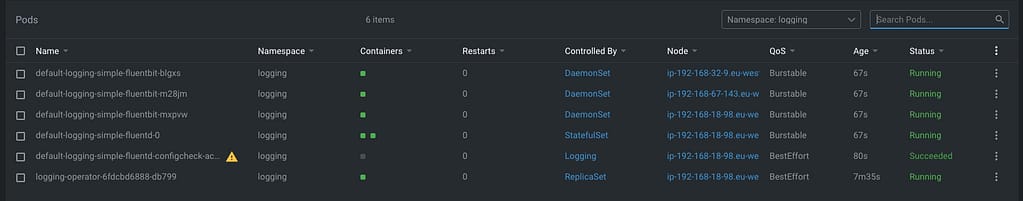

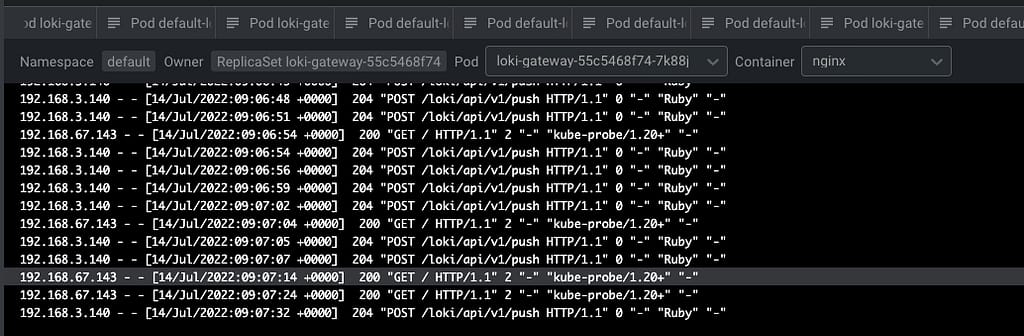

There will certainly be some features that you lose if you switch from Lens to OpenLens, namely the ones provided by the licensed pieces of software. Here is a non-exhaustive list based on our experience using both products:

- Account Synchronization: The ability to have all your Kubernetes clusters under your Lens account and synced will not be available on OpenLens. You will rely on the content of the kubeconfig file.

- Spaces: The option to share your configuration between different users who belong to the same team is not available on OpenLens.

- Scan Image: One of the new capabilities of Lens 6 is the option to scan the images of the containers deployed on the cluster, but this is not available on OpenLens.
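Since OpenLens relies on the kubeconfig file rather than account sync, it helps to know where that file usually lives. The sketch below follows the standard kubectl convention (the `KUBECONFIG` environment variable as a path list, falling back to `~/.kube/config`). Whether OpenLens resolves paths exactly this way is an assumption, and `kubeconfig_paths` is a hypothetical helper, not part of any Lens or Kubernetes API.

```python
import os
from pathlib import Path


def kubeconfig_paths(env=None, home=None):
    """Return candidate kubeconfig files following the kubectl convention.

    Hypothetical helper: KUBECONFIG may hold several paths separated by
    os.pathsep (':' on Linux/macOS, ';' on Windows); if it is unset or
    empty, the default is <home>/.kube/config.
    """
    env = os.environ if env is None else env
    home = Path.home() if home is None else Path(home)
    raw = env.get("KUBECONFIG", "")
    paths = [Path(p) for p in raw.split(os.pathsep) if p]
    return paths or [home / ".kube" / "config"]


if __name__ == "__main__":
    for path in kubeconfig_paths():
        status = "found" if path.is_file() else "missing"
        print(f"{path}: {status}")
```

Running it on your machine lists the files a kubeconfig-based tool would typically pick up, which is a quick way to check what clusters you would see after switching.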
